Quick Summary — TL;DR

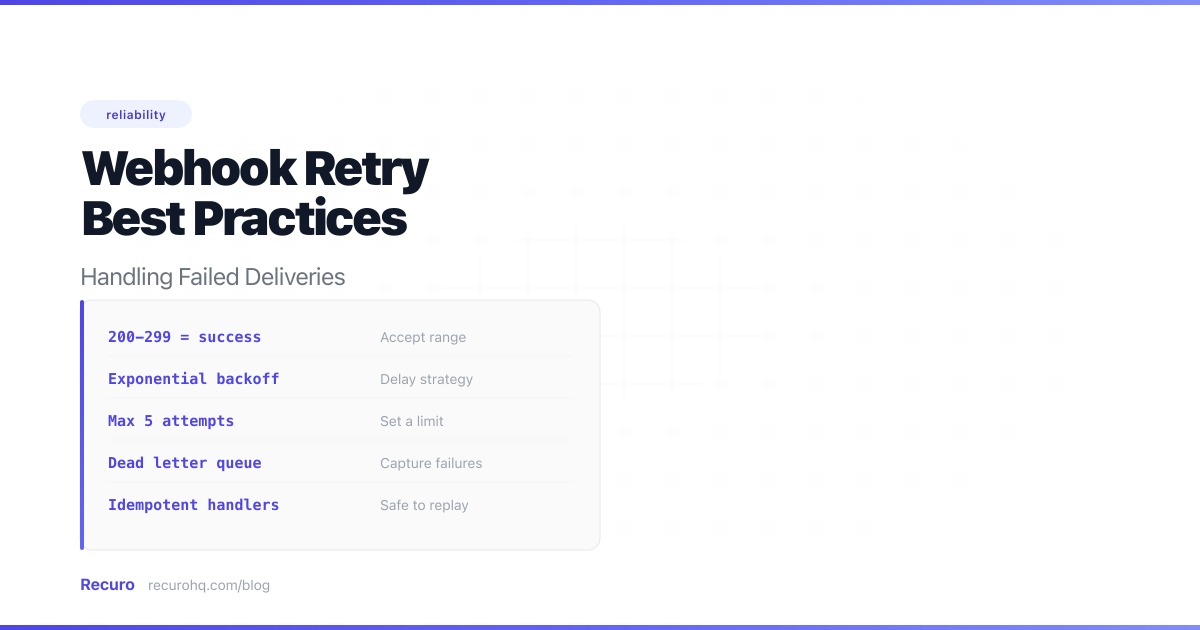

- Use exponential backoff with jitter to space out retries and avoid thundering herd problems.

- Classify errors: retry transient failures (5xx, 429, timeouts) but stop immediately on permanent errors (400, 401, 404).

- Add circuit breakers to stop retrying endpoints that have been failing for an extended period.

- Route exhausted retries to a dead letter queue so no webhook data is lost and failed events can be redelivered.

- Make webhook handlers idempotent, because at-least-once delivery means duplicate events will arrive.

A webhook just failed. Maybe the server returned a 503. Maybe the connection timed out. Maybe DNS couldn’t resolve. Whatever the cause, you have a decision to make: retry now, retry later, or give up.

The wrong answer is “retry immediately, as fast as possible, forever.” That turns a temporary blip into a self-inflicted DDoS. The right answer involves exponential backoff, jitter, failure classification, and knowing when to stop.

This guide focuses on webhook-specific retry patterns — circuit breakers per endpoint, dead letter queues, provider retry schedules, and the sender vs receiver distinction. For general HTTP client retry logic (retrying your own outbound API calls), see How to Retry Failed HTTP Requests with Exponential Backoff.

Why webhooks fail

Before building retry logic, understand what you’re defending against. Webhook deliveries fail for predictable reasons:

| Failure type | HTTP status | Retryable? | Typical duration |
|---|---|---|---|
| Server overloaded | 503 Service Unavailable | Yes | Seconds to minutes |
| Rate limited | 429 Too Many Requests | Yes (respect Retry-After) | Seconds to minutes |
| Gateway issues | 502 Bad Gateway | Yes | Seconds to minutes |
| Gateway timeout | 504 or connection timeout | Yes | Varies |
| Internal server error | 500 | Maybe | Varies |
| DNS resolution failure | Connection error | Yes | Minutes to hours |
| TLS handshake failure | Connection error | Maybe | Depends on cause |
| Bad request | 400 | No | Permanent |
| Unauthorized | 401/403 | No | Permanent until fixed |
| Not found | 404 | No | Permanent |
| Unprocessable entity | 422 | No | Permanent |
| Endpoint decommissioned | Connection refused | No | Permanent |

The key distinction: transient failures (5xx, timeouts, rate limits) resolve themselves and should be retried. Permanent failures (4xx except 408/429) indicate a bug in the request or configuration — retrying won’t help.

The 200 rule

Most webhook providers consider any response outside the 2xx range as a failure. Some treat 3xx redirects as failures too. When building a webhook sender, define your success criteria clearly:

- 2xx → success, stop retrying

- 3xx → follow redirects up to a limit (3-5 hops), then fail

- 4xx (except 408, 429) → permanent failure, don’t retry

- 408, 429 → transient, retry with backoff

- 5xx → transient, retry with backoff

- Connection error → transient, retry with backoff
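The rules above fit in a small classification function. This is a minimal sketch: the function names and the returned labels are illustrative and do not come from any library.

```javascript
// Map an HTTP status code to a retry decision, following the list above.
function classifyStatus(status) {
  if (status >= 200 && status < 300) return 'success';
  if (status >= 300 && status < 400) return 'redirect'; // follow up to a hop limit, then fail
  if (status === 408 || status === 429) return 'transient';
  if (status >= 400 && status < 500) return 'permanent';
  if (status >= 500 && status < 600) return 'transient';
  return 'permanent'; // anything outside 2xx-5xx is unexpected; treat it as a failure
}

// A connection-level failure (DNS, TLS, timeout) has no status code at all.
function classifyOutcome(outcome) {
  if (outcome.error) return 'transient';
  return classifyStatus(outcome.status);
}
```

Keeping classification separate from the delivery loop makes it easy to adjust individual codes later without touching the retry logic.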

Sender-side vs receiver-side: two different problems

Most articles conflate these, but they’re fundamentally different. The rest of this guide focuses primarily on sender-side retry logic — the strategies, algorithms, and infrastructure you need when you are the one delivering webhooks. If you are on the receiving side, the key takeaways are in the receiver section below.

If you’re the sender (webhook provider)

You control the retry logic. Your responsibilities:

- Implement exponential backoff with jitter

- Classify errors (retryable vs permanent)

- Respect Retry-After headers

- Use circuit breakers for unhealthy endpoints

- Route exhausted retries to a DLQ

- Provide delivery logs and manual redelivery

- Disable endpoints after sustained failures (with notification)

- Document your retry policy so consumers know what to expect

If you’re the receiver (webhook consumer)

You can’t control when retries arrive. Your responsibilities:

- Return 200 fast — respond within the provider’s timeout (5-30 seconds)

- Process asynchronously — queue the work, don’t do it inline

- Be idempotent — deduplicate by event ID

- Handle out-of-order events — don’t assume events arrive sequentially

- Verify signatures — authenticate every request

- Log everything — you’ll need it when debugging missed or duplicate events
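A receiver that follows the first few points might look roughly like the sketch below. It assumes the provider signs the raw request body with hex-encoded HMAC-SHA256, which is common but not universal; the secret, the in-memory queue, and the function names are all hypothetical, and real providers differ in header names and signing schemes.

```javascript
const crypto = require('node:crypto');

const SECRET = 'whsec_example'; // hypothetical shared secret
const queue = [];               // stand-in for a real job queue

function sign(rawBody, secret) {
  return crypto.createHmac('sha256', secret).update(rawBody).digest('hex');
}

function verifySignature(rawBody, signatureHex, secret) {
  const expected = Buffer.from(sign(rawBody, secret), 'hex');
  const received = Buffer.from(String(signatureHex), 'hex');
  // timingSafeEqual throws on a length mismatch, so compare lengths first.
  if (received.length !== expected.length) return false;
  return crypto.timingSafeEqual(expected, received);
}

// Verify, enqueue, acknowledge. No business logic runs inline.
function receiveWebhook(rawBody, signatureHex) {
  if (!verifySignature(rawBody, signatureHex, SECRET)) {
    return { status: 401 };
  }
  let event;
  try {
    event = JSON.parse(rawBody);
  } catch (err) {
    return { status: 400 };
  }
  queue.push(event); // a background worker drains this later
  return { status: 200 };
}
```

Because the handler only verifies and enqueues, it returns well inside even the shortest provider timeout.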

Retry strategy 1: Exponential backoff

The foundation of every webhook retry system. Instead of retrying at fixed intervals, each subsequent attempt waits exponentially longer:

```
delay = base_delay × 2^(attempt - 1)
```

Here attempt is the retry number, counted from 1. With a 30-second base delay:

| Attempt | Delay | Cumulative time |
|---|---|---|
| 1st retry | 30 seconds | 30 seconds |
| 2nd retry | 1 minute | 1.5 minutes |
| 3rd retry | 2 minutes | 3.5 minutes |
| 4th retry | 4 minutes | 7.5 minutes |
| 5th retry | 8 minutes | 15.5 minutes |
| 6th retry | 16 minutes | 31.5 minutes |
| 7th retry | 32 minutes | 63.5 minutes |
| 8th retry | 64 minutes | ~2 hours |
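The table can be reproduced with a few lines of code, which is handy when you want to try a different base delay or retry count. The helper name is illustrative; delays are in seconds and no jitter is applied.

```javascript
// Compute the un-jittered schedule for delay = base × 2^(attempt - 1).
function backoffSchedule(baseDelaySeconds, retries) {
  const schedule = [];
  let cumulative = 0;
  for (let attempt = 1; attempt <= retries; attempt++) {
    const delay = baseDelaySeconds * 2 ** (attempt - 1);
    cumulative += delay;
    schedule.push({ attempt, delay, cumulative });
  }
  return schedule;
}

const schedule = backoffSchedule(30, 8);
console.log(schedule[7]); // { attempt: 8, delay: 3840, cumulative: 7650 }
```

The last entry is 3,840 seconds (64 minutes) of delay and 7,650 seconds (127.5 minutes) cumulative, which matches the "~2 hours" row.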

Why exponential?

- Gives the server time to recover. A server returning 503 might need seconds (load balancer rebalancing), minutes (deployment completing), or hours (engineer fixing a bug). Exponential spacing covers all these windows.

- Reduces pressure on the failing system. The longer retries are spaced, the less load you add to an already-struggling endpoint.

- Avoids wasted compute. If a server is down for 30 minutes, there’s no point retrying 60 times during that window.

Implementation

Node.js:

```javascript
async function deliverWebhook(url, payload, options = {}) {
  const { maxRetries = 8, baseDelay = 30_000 } = options;

  for (let attempt = 0; attempt <= maxRetries; attempt++) {
    try {
      const response = await fetch(url, {
        method: 'POST',
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify(payload),
        signal: AbortSignal.timeout(30_000),
      });

      if (response.ok) return { success: true, attempt };

      // Don't retry client errors (except 408, 429)
      if (response.status < 500 && response.status !== 408 && response.status !== 429) {
        return { success: false, status: response.status, permanent: true };
      }

      // Respect Retry-After header (seconds form only; an HTTP-date value
      // parses to NaN and falls through to the normal backoff below)
      if (response.status === 429 && attempt < maxRetries) {
        const retryAfter = parseInt(response.headers.get('Retry-After') || '0', 10);
        if (retryAfter > 0) {
          await sleep(retryAfter * 1000);
          continue;
        }
      }
    } catch (error) {
      // Connection errors and timeouts are retryable
      if (attempt === maxRetries) {
        return { success: false, error: error.message };
      }
    }

    // Wait before the next attempt; skip the wait after the final one
    if (attempt < maxRetries) {
      const delay = baseDelay * Math.pow(2, attempt);
      await sleep(delay);
    }
  }

  return { success: false, retriesExhausted: true };
}

function sleep(ms) {
  return new Promise(resolve => setTimeout(resolve, ms));
}
```

Python:

```python
import time

import requests


def deliver_webhook(url, payload, max_retries=8, base_delay=30):
    for attempt in range(max_retries + 1):
        try:
            response = requests.post(url, json=payload, timeout=30)

            if response.ok:
                return {"success": True, "attempt": attempt}

            # Don't retry client errors (except 408, 429)
            if response.status_code < 500 and response.status_code not in (408, 429):
                return {"success": False, "status": response.status_code, "permanent": True}

            # Respect Retry-After when it is given as a number of seconds
            if response.status_code == 429 and attempt < max_retries:
                try:
                    retry_after = int(response.headers.get("Retry-After", 0))
                except ValueError:
                    retry_after = 0  # HTTP-date form: fall back to normal backoff
                if retry_after > 0:
                    time.sleep(retry_after)
                    continue

        except requests.exceptions.RequestException:
            # Connection errors and timeouts are retryable
            if attempt == max_retries:
                return {"success": False, "error": "connection_failed"}

        # Wait before the next attempt; skip the wait after the final one
        if attempt < max_retries:
            delay = base_delay * (2 ** attempt)
            time.sleep(delay)

    return {"success": False, "retries_exhausted": True}
```

PHP (using Laravel’s HTTP client):

```php
use Illuminate\Http\Client\ConnectionException;
use Illuminate\Support\Facades\Http;

function deliverWebhook(string $url, array $payload, int $maxRetries = 8, int $baseDelay = 30): array
{
    for ($attempt = 0; $attempt <= $maxRetries; $attempt++) {
        try {
            $response = Http::timeout(30)->post($url, $payload);

            if ($response->successful()) {
                return ['success' => true, 'attempt' => $attempt];
            }

            // Don't retry client errors (except 408, 429)
            if ($response->status() < 500 && !in_array($response->status(), [408, 429])) {
                return ['success' => false, 'status' => $response->status(), 'permanent' => true];
            }

            // Respect Retry-After when it is given as a number of seconds
            if ($response->status() === 429 && $attempt < $maxRetries) {
                $retryAfter = (int) $response->header('Retry-After');
                if ($retryAfter > 0) {
                    sleep($retryAfter);
                    continue;
                }
            }
        } catch (ConnectionException $e) {
            // Connection errors and timeouts are retryable
            if ($attempt === $maxRetries) {
                return ['success' => false, 'error' => $e->getMessage()];
            }
        }

        // Wait before the next attempt; skip the wait after the final one
        if ($attempt < $maxRetries) {
            sleep($baseDelay * (2 ** $attempt));
        }
    }

    return ['success' => false, 'retries_exhausted' => true];
}
```

Retry strategy 2: Adding jitter

Pure exponential backoff has a hidden problem: if 1,000 webhooks all fail at the same time (e.g., the target server goes down), they’ll all retry at the same intervals — 30s, 60s, 120s. This creates periodic spikes that can re-trigger the original failure.

Jitter adds randomness to break up these waves.

Full jitter

Randomize the delay between 0 and the calculated backoff:

```
delay = random(0, base_delay × 2^attempt)
```

```javascript
const delay = Math.random() * baseDelay * Math.pow(2, attempt);
```

This spreads retries evenly across the entire backoff window. It’s AWS’s recommended approach and the best default choice.

Equal jitter

Use half the calculated delay plus a random amount up to half:

```
delay = (base_delay × 2^attempt) / 2 + random(0, (base_delay × 2^attempt) / 2)
```

```javascript
const calculated = baseDelay * Math.pow(2, attempt);
const delay = calculated / 2 + Math.random() * (calculated / 2);
```

This guarantees a minimum delay (half the backoff) while still adding randomness. Use this when you want a floor on your retry timing.

Decorrelated jitter

Each delay is random between the base delay and 3x the previous delay:

```
delay = random(base_delay, previous_delay × 3)
```

```javascript
let previousDelay = baseDelay;

// In your retry loop:
const delay = baseDelay + Math.random() * (previousDelay * 3 - baseDelay);
previousDelay = delay;
```

This produces more aggressive spreading for high-contention scenarios.

Which jitter to use?

| Jitter type | Min delay | Max delay | Best for |
|---|---|---|---|
| Full | 0 | Full backoff | General use (recommended) |
| Equal | Half backoff | Full backoff | When you need a delay floor |
| Decorrelated | Base delay | 3× previous | High-contention, many clients |

For most webhook systems, full jitter is the right choice. It maximizes the spread of retries with the simplest implementation.
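For side-by-side comparison, the three strategies can be written as small functions. This is a sketch under two assumptions that are not part of the formulas above: a maxDelay cap, so delays cannot grow without bound, and an injectable random function, so the output can be tested deterministically.

```javascript
// Full jitter: anywhere between 0 and the (capped) backoff.
function fullJitter(baseDelay, attempt, maxDelay, random = Math.random) {
  const backoff = Math.min(maxDelay, baseDelay * 2 ** attempt);
  return random() * backoff;
}

// Equal jitter: half the backoff is fixed, the other half is random.
function equalJitter(baseDelay, attempt, maxDelay, random = Math.random) {
  const backoff = Math.min(maxDelay, baseDelay * 2 ** attempt);
  return backoff / 2 + random() * (backoff / 2);
}

// Decorrelated jitter: depends on the previous delay, not the attempt number.
function decorrelatedJitter(baseDelay, previousDelay, maxDelay, random = Math.random) {
  const upper = Math.max(baseDelay, previousDelay * 3);
  return Math.min(maxDelay, baseDelay + random() * (upper - baseDelay));
}
```

With a 30-second base, attempt 2, and a random value of 0.5, full jitter yields 60 seconds and equal jitter yields 90 seconds, which shows the floor that equal jitter adds.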

Retry strategy 3: Predefined schedules

Some providers skip the formula and use a hardcoded retry schedule. This is more predictable and easier to communicate to users:

```javascript
const RETRY_SCHEDULE = [
  10,     // 10 seconds
  30,     // 30 seconds
  120,    // 2 minutes
  600,    // 10 minutes
  1800,   // 30 minutes
  7200,   // 2 hours
  28800,  // 8 hours
  86400,  // 24 hours
];
```

Advantages:

- Easy to document and explain to customers

- Users know exactly when retries will happen

- No surprises from exponential math producing very long delays

Disadvantages:

- No jitter (all failed webhooks follow the same schedule)

- Less flexible than computed backoff

- Harder to tune dynamically

You can combine both: use a predefined schedule as the base timing, then add jitter:

```javascript
function getRetryDelay(attempt) {
  const scheduleDelay = RETRY_SCHEDULE[Math.min(attempt, RETRY_SCHEDULE.length - 1)];
  const jitter = 0.5 + Math.random() * 0.5; // 50-100% of scheduled delay
  return scheduleDelay * jitter;
}
```

How major providers handle retries

No two providers retry the same way. Here’s how the big ones do it:

| Provider | Max retries | Retry window | Strategy | Timeout |
|---|---|---|---|---|
| Stripe | ~15 | 72 hours (3 days) | Exponential backoff | 30 seconds |
| GitHub | None (no automatic retries) | N/A | Manual or API-driven redelivery | 10 seconds |
| Shopify | 19 | 48 hours | Exponential backoff | 5 seconds |
| Twilio | 1 | ~15 seconds | Single retry | 15 seconds |
| SendGrid | Multiple | 72 hours | Exponential backoff | 10 seconds |
| Slack | 3 | ~30 minutes | Exponential backoff | 3 seconds |
| PayPal (IPN) | Multiple | 4 days | Increasing intervals | 20 seconds |
| Discord | 5 | ~30 minutes | Exponential backoff | 10 seconds |

These figures are approximate and providers revise them, so check each provider’s current documentation before relying on a specific number.

Key observations:

- Retry windows range from a few seconds to several days

- PayPal IPN and Stripe have the longest windows in this list, at 4 days and 72 hours

- GitHub is the most conservative: it does not retry failed deliveries automatically and leaves redelivery to you. Twilio makes a single retry

- Timeout values range from 3 to 30 seconds; if your handler takes longer, the provider considers it a failure

What this means for your handler

If you’re consuming webhooks:

- Return 200 within the provider’s timeout window (usually 5-30 seconds)

- If processing takes longer, acknowledge immediately and process asynchronously

- Your handler must be idempotent because you will receive duplicate events

If you’re sending webhooks:

- Give consumers at least 10 seconds to respond

- Retry for at least a few hours (most transient issues resolve within that window)

- Provide a webhook delivery log so consumers can debug failures

- Offer manual redelivery for failed webhooks

Circuit breakers: When retries aren’t enough

Exponential backoff handles individual webhook failures. But what if an endpoint is down for hours or days? You’re burning resources on retries that will definitely fail.

A circuit breaker stops retrying after detecting sustained failures:

```
CLOSED (normal) → failures exceed threshold → OPEN (stop sending)
OPEN → cooldown period passes → HALF-OPEN (try one request)
HALF-OPEN → success → CLOSED | failure → OPEN
```

Implementation

```javascript
class CircuitBreaker {
  constructor(options = {}) {
    this.failureThreshold = options.failureThreshold || 5;
    this.cooldownMs = options.cooldownMs || 300_000; // 5 minutes
    this.state = 'CLOSED';
    this.failureCount = 0;
    this.lastFailureTime = null;
  }

  async execute(fn) {
    if (this.state === 'OPEN') {
      if (Date.now() - this.lastFailureTime > this.cooldownMs) {
        this.state = 'HALF_OPEN'; // cooldown elapsed: allow one trial request
      } else {
        throw new Error('Circuit breaker is OPEN');
      }
    }

    try {
      const result = await fn();
      this.onSuccess();
      return result;
    } catch (error) {
      this.onFailure();
      throw error;
    }
  }

  onSuccess() {
    this.failureCount = 0;
    this.state = 'CLOSED';
  }

  onFailure() {
    this.failureCount++;
    this.lastFailureTime = Date.now();
    if (this.failureCount >= this.failureThreshold) {
      this.state = 'OPEN';
    }
  }
}

// Usage: one circuit breaker per endpoint
const breakers = new Map();

async function deliverWithCircuitBreaker(endpointUrl, payload) {
  if (!breakers.has(endpointUrl)) {
    breakers.set(endpointUrl, new CircuitBreaker());
  }

  const breaker = breakers.get(endpointUrl);
  return breaker.execute(async () => {
    const result = await deliverWebhook(endpointUrl, payload);
    // deliverWebhook reports failure through its return value, so convert
    // it to an exception that the breaker can count
    if (!result.success) {
      throw new Error(`Delivery to ${endpointUrl} failed`);
    }
    return result;
  });
}
```

A note on state: This implementation stores circuit breaker state in memory, which means it resets on every deploy and is not shared across multiple processes or servers. For production distributed systems, store the failure count, state, and last failure time in a persistent store like Redis or a database so that all processes share a consistent view of endpoint health.

When to use circuit breakers

- You’re sending webhooks to many different endpoints (multi-tenant SaaS)

- A single broken endpoint shouldn’t consume your entire retry budget

- You want to protect your infrastructure from runaway retry queues

- You need to notify users that their endpoint is unhealthy

Circuit breakers complement retries — they don’t replace them. Use retries for transient failures within a delivery attempt, and circuit breakers to manage endpoint-level health over time.

Dead letter queues

When all retries are exhausted or the circuit breaker is open, what happens to the webhook? It shouldn’t just disappear. Enter the dead letter queue (DLQ).

A DLQ is a holding area for webhooks that couldn’t be delivered. It gives you:

- Nothing is lost — failed webhooks are preserved for inspection and manual redelivery

- Debugging data — examine exactly what failed, when, and why

- Manual recovery — redeliver individual events or entire batches once the endpoint is fixed

Implementation patterns

Database-backed DLQ:

```sql
CREATE TABLE dead_letter_webhooks (
  id BIGINT PRIMARY KEY AUTO_INCREMENT,
  endpoint_id BIGINT NOT NULL,
  event_type VARCHAR(255) NOT NULL,
  payload JSON NOT NULL,
  headers JSON NOT NULL,
  attempts INT NOT NULL DEFAULT 0,
  last_error TEXT,
  last_status INT,
  failed_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP,
  redelivered_at TIMESTAMP NULL,
  INDEX idx_endpoint_failed (endpoint_id, failed_at)
);
```

Queue-backed DLQ (SQS, RabbitMQ):

Most message queues have native DLQ support. In AWS SQS:

```json
{
  "deadLetterTargetArn": "arn:aws:sqs:us-east-1:123456789:webhook-dlq",
  "maxReceiveCount": 8
}
```

After 8 failed processing attempts, the message moves to the DLQ automatically.

DLQ best practices

- Set retention. Don’t keep dead letters forever. 7-30 days is typical.

- Alert on DLQ growth. A growing DLQ means something is broken. Set up alerts.

- Provide redelivery UI. Let users (or ops) view dead letters and trigger redelivery with one click.

- Include context. Store the error message, HTTP status, response body, and all retry attempt timestamps alongside the payload.
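To make these practices concrete, here is an in-memory sketch of a DLQ with retention, per-endpoint lookup, and redelivery. The class and its method names are hypothetical; a production system would back this with the database table or message queue described above, and the deliver callback stands in for whatever delivery function you already have.

```javascript
class DeadLetterQueue {
  constructor({ retentionMs = 14 * 24 * 60 * 60 * 1000 } = {}) {
    this.retentionMs = retentionMs; // assumed default: 14 days
    this.entries = [];
  }

  // Store the payload together with the failure context.
  add({ endpointUrl, payload, attempts, lastError, lastStatus }, now = Date.now()) {
    const entry = {
      endpointUrl, payload, attempts, lastError, lastStatus,
      failedAt: now,
      redeliveredAt: null,
    };
    this.entries.push(entry);
    return entry;
  }

  // Drop entries past retention; returns how many were removed.
  purgeExpired(now = Date.now()) {
    const before = this.entries.length;
    this.entries = this.entries.filter(e => now - e.failedAt <= this.retentionMs);
    return before - this.entries.length;
  }

  // Entries still awaiting redelivery for one endpoint.
  pendingFor(endpointUrl) {
    return this.entries.filter(
      e => e.endpointUrl === endpointUrl && e.redeliveredAt === null
    );
  }

  // Redeliver pending entries through the supplied async deliver function.
  async redeliver(endpointUrl, deliver, now = Date.now()) {
    let redelivered = 0;
    for (const entry of this.pendingFor(endpointUrl)) {
      const result = await deliver(entry.endpointUrl, entry.payload);
      if (result.success) {
        entry.redeliveredAt = now;
        redelivered++;
      }
    }
    return redelivered;
  }
}
```

Alerting on DLQ growth then reduces to watching the size of the pending set over time.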

Respecting rate limits and the Retry-After header

When a server returns 429 Too Many Requests, it’s telling you to slow down. Many include a Retry-After header with either a delay in seconds or a specific datetime:

```
HTTP/1.1 429 Too Many Requests
Retry-After: 60
```

Always respect this header. It overrides your backoff calculation:

```javascript
if (response.status === 429) {
  const retryAfter = response.headers.get('Retry-After');

  let delayMs;
  if (retryAfter) {
    const seconds = parseInt(retryAfter, 10);
    if (isNaN(seconds)) {
      // It's a date string: "Wed, 21 Oct 2026 07:28:00 GMT"
      const dateMs = new Date(retryAfter).getTime();
      // An unparseable or past date yields no usable delay
      if (!isNaN(dateMs) && dateMs > Date.now()) {
        delayMs = dateMs - Date.now();
      }
    } else {
      delayMs = seconds * 1000;
    }
  }

  // Use Retry-After delay, but cap it at your maximum
  const delay = Math.min(delayMs || baseDelay, MAX_DELAY);
  await sleep(delay);
}
```

If you’re building a webhook receiver, include Retry-After in your 429 responses to help senders behave correctly.

Idempotency: handling duplicate deliveries

Retry logic means your receiver will get duplicate events. At-least-once delivery is the standard — exactly-once is extremely hard and most providers don’t attempt it.

Your handler must be idempotent: processing the same event twice produces the same result as processing it once.

Implementation

Store processed event IDs and check before acting:

```javascript
async function handleWebhook(event) {
  let alreadyProcessed = false;

  // Run the duplicate check and the processing inside one transaction
  await db.transaction(async (tx) => {
    // Deduplicate by event ID
    const existing = await tx.processedWebhooks.findOne({
      where: { eventId: event.id },
    });

    if (existing) {
      alreadyProcessed = true;
      return;
    }

    await processEvent(event, tx);
    await tx.processedWebhooks.create({ eventId: event.id, processedAt: new Date() });
  });

  return {
    status: 200,
    message: alreadyProcessed ? 'Already processed' : 'Processed',
  };
}
```

Critical: The deduplication check and the business logic must happen in the same transaction. Otherwise, a crash between processing and recording creates a window for duplicates. A unique constraint on the event ID column adds a second safeguard: two concurrent deliveries of the same event conflict instead of both being processed.

Clean up old records

Don’t let the deduplication table grow forever:

```sql
-- Delete records older than 7 days
DELETE FROM processed_webhooks WHERE processed_at < NOW() - INTERVAL 7 DAY;
```

Seven days is enough: if you receive a duplicate more than a week later, something far more serious is wrong.
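The deduplication idea itself is easy to see in isolation. The sketch below is an in-memory illustration only: a Map stands in for the processed_webhooks table, the wrapper name is hypothetical, and it provides none of the crash safety that the transactional version gives you.

```javascript
// Wrap a processing function so each event ID is handled at most once.
function createIdempotentHandler(processEvent) {
  const seen = new Map(); // eventId -> result of the first processing

  return function handle(event) {
    if (seen.has(event.id)) {
      return { status: 200, message: 'Already processed', result: seen.get(event.id) };
    }
    // If processEvent throws, nothing is recorded, so a later retry can succeed.
    const result = processEvent(event);
    seen.set(event.id, result);
    return { status: 200, message: 'Processed', result };
  };
}

// Usage: the side effect runs once even though the event arrives twice.
let charges = 0;
const handle = createIdempotentHandler(() => ++charges);
handle({ id: 'evt_1' });
handle({ id: 'evt_1' }); // duplicate delivery
console.log(charges); // 1
```

Returning 200 for the duplicate matters: any other response tells the sender the delivery failed and triggers yet another retry.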

Monitoring and alerting

Retry logic is only useful if you know it’s working. Track these metrics:

Key metrics

| Metric | What it tells you | Alert threshold |
|---|---|---|
| Delivery success rate | Overall health | Below 99% |
| Average retries per delivery | Target endpoint health | Above 2 |
| P99 delivery latency | How long deliveries take | Above 5 minutes |
| DLQ depth | Unresolvable failures | Any growth |
| Circuit breaker trips | Endpoints that are down | Any trip |
| Retry queue depth | Backlog of pending retries | Growing trend |
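The first three metrics can be derived from a log of finished deliveries. The sketch below assumes a record shape of { success, retries, latencyMs }, which is an illustration rather than a standard format, and it uses a nearest-rank percentile; a real system would more likely export these numbers to a metrics backend.

```javascript
// Summarize a batch of finished deliveries.
function summarizeDeliveries(deliveries) {
  if (deliveries.length === 0) {
    return { successRate: 1, averageRetries: 0, p99LatencyMs: 0 };
  }
  const succeeded = deliveries.filter(d => d.success).length;
  const totalRetries = deliveries.reduce((sum, d) => sum + d.retries, 0);
  const latencies = deliveries.map(d => d.latencyMs).sort((a, b) => a - b);
  // Nearest rank: the smallest value with at least 99% of samples at or below it.
  const rank = Math.ceil((99 * latencies.length) / 100);
  return {
    successRate: succeeded / deliveries.length,
    averageRetries: totalRetries / deliveries.length,
    p99LatencyMs: latencies[rank - 1],
  };
}

// Compare a summary against the alert thresholds from the table above.
function breachedThresholds(summary) {
  const alerts = [];
  if (summary.successRate < 0.99) alerts.push('delivery_success_rate');
  if (summary.averageRetries > 2) alerts.push('average_retries');
  if (summary.p99LatencyMs > 5 * 60 * 1000) alerts.push('p99_latency');
  return alerts;
}
```

Computing the summary per endpoint as well as globally gives you the data for both per-endpoint and system-wide alerts.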

Alerting strategy

Set up alerts at three levels:

- Per-endpoint: Alert the endpoint owner when their webhook starts failing. Include the error, HTTP status, and number of consecutive failures.

- Per-tenant: Alert when a customer’s overall delivery rate drops below a threshold.

- System-wide: Alert your ops team when the retry queue is growing faster than it’s draining, or when circuit breakers are tripping across many endpoints.

```javascript
function checkAlertThresholds(endpoint) {
  if (endpoint.consecutiveFailures >= endpoint.alertThreshold) {
    sendAlert({
      type: 'webhook_endpoint_failing',
      endpoint: endpoint.url,
      failures: endpoint.consecutiveFailures,
      lastError: endpoint.lastError,
      lastStatus: endpoint.lastStatus,
    });
  }
}
```

A complete retry architecture

Here’s how all the pieces fit together for a production webhook delivery system:

```
Event occurs
    ↓
Serialize payload + compute signature
    ↓
Attempt delivery (HTTP POST, 30s timeout)
    ↓
Success (2xx)?
    ├─ Yes → Log delivery, done
    └─ No → Classify error
         ├─ Permanent (4xx except 408/429) → Log failure, done
         ├─ Rate limited (429) → Read Retry-After, schedule retry
         └─ Transient (5xx/timeout/connection) → Schedule retry
              ↓
         Check circuit breaker
              ├─ OPEN → Route to DLQ, alert endpoint owner
              └─ CLOSED/HALF_OPEN → Calculate delay with backoff + jitter
                   ↓
              Retries exhausted?
                   ├─ Yes → Route to DLQ, alert endpoint owner
                   └─ No → Enqueue retry with calculated delay
                        ↓
                   (loop back to "Attempt delivery")
```

Each component is independent and can be implemented incrementally:

- Start with basic exponential backoff (covers the most common transient failures)

- Add jitter (prevents thundering herd)

- Add error classification (stops wasting retries on permanent failures)

- Add Retry-After support (plays nice with rate-limited endpoints)

- Add circuit breakers (protects your infrastructure from dead endpoints)

- Add DLQ (ensures nothing is lost)

- Add monitoring and alerting (makes failures visible)
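The decision flow above can also be expressed as one pure function that takes the outcome of an attempt and returns what to do next. This is a sketch with illustrative names and defaults: status is null for connection errors and timeouts, attempt is the number of retries already made, redirects are assumed to have been followed by the HTTP client, and full jitter is used for the delay.

```javascript
function nextStep({
  status,
  attempt,
  maxRetries,
  breakerState,
  retryAfterMs,
  baseDelayMs = 30_000,
  maxDelayMs = 3_600_000,
  random = Math.random,
}) {
  // Success: log the delivery and stop.
  if (status !== null && status >= 200 && status < 300) return { action: 'done' };

  // Permanent failure: log it and stop.
  const transient = status === null || status === 408 || status === 429 || status >= 500;
  if (!transient) return { action: 'drop', reason: 'permanent' };

  // Transient failure: check endpoint health, then the retry budget.
  if (breakerState === 'OPEN') return { action: 'dlq', reason: 'circuit_open' };
  if (attempt >= maxRetries) return { action: 'dlq', reason: 'retries_exhausted' };

  // Schedule a retry: prefer Retry-After on 429, otherwise backoff with full jitter.
  const backoff = Math.min(maxDelayMs, baseDelayMs * 2 ** attempt);
  const delayMs = status === 429 && retryAfterMs > 0
    ? Math.min(retryAfterMs, maxDelayMs)
    : random() * backoff;
  return { action: 'retry', delayMs };
}
```

Keeping this logic free of I/O means the worker that performs the HTTP request, the queue that schedules retries, and the DLQ can each be swapped out independently.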

Let Recuro handle retries for you

Recuro handles exponential backoff, jitter, failure alerts, execution history, and manual retry out of the box:

- Configurable retry policies — set max retries and backoff strategy per job or per queue

- Automatic exponential backoff with jitter to prevent thundering herd

- Failure alerts — get notified when retries are exhausted, with full execution logs

- Execution history — see every attempt with status code, response body, headers, and timing

- Manual retry — refire any failed webhook with one click


Frequently asked questions

What is webhook retry logic?

Webhook retry logic is the mechanism that automatically re-attempts delivery of a webhook when the initial attempt fails. When a webhook endpoint returns a non-2xx status code, times out, or is unreachable, the retry system schedules another delivery attempt after a delay. Good retry logic uses exponential backoff (increasing delays between attempts), jitter (randomization to prevent thundering herd), and a maximum retry count to avoid infinite loops.

What is exponential backoff for webhooks?

Exponential backoff is a retry strategy where each subsequent attempt waits exponentially longer than the last. For example, with a 30-second base delay: the first retry waits 30 seconds, the second waits 1 minute, the third waits 2 minutes, and so on (delay = base × 2^(attempt - 1), counting retries from 1). This gives the failing endpoint progressively more time to recover while reducing load on both the sender and receiver.

How many times should you retry a webhook?

Most production systems retry 5-15 times over a window of 1-72 hours. Stripe retries up to 15 times over 72 hours. GitHub, by contrast, does not retry failed deliveries automatically and relies on manual or API-driven redelivery. A good starting point is 8 retries with a 30-second base delay and exponential backoff, which gives you about 2 hours of coverage. Adjust based on your use case: payment webhooks may need more retries than notification webhooks.

What is jitter in retry logic?

Jitter adds randomness to retry delays to prevent the 'thundering herd' problem. If 1,000 webhooks all fail at the same time and use pure exponential backoff, they all retry at the same intervals, creating periodic traffic spikes. Full jitter randomizes the delay between 0 and the calculated backoff value, spreading retries evenly. AWS recommends full jitter as the default approach for distributed retry systems.

What is a dead letter queue for webhooks?

A dead letter queue (DLQ) is a holding area for webhook events that couldn't be delivered after all retry attempts are exhausted. Instead of losing the event, it's moved to the DLQ where it can be inspected, debugged, and manually redelivered once the issue is resolved. DLQs ensure no webhook data is lost and provide visibility into persistent delivery failures.

Should I retry 4xx errors on webhooks?

Generally no. Most 4xx errors indicate a permanent problem: 400 (bad request), 401 (unauthorized), 403 (forbidden), 404 (not found), and 422 (unprocessable entity) won't resolve themselves. The two exceptions are 408 (request timeout, which is transient) and 429 (too many requests, which means you should retry after a delay). For 429, always respect the Retry-After header if present.

What is a circuit breaker for webhooks?

A circuit breaker is a pattern that stops sending webhooks to an endpoint after detecting sustained failures. It has three states: CLOSED (normal operation), OPEN (stop sending — endpoint is unhealthy), and HALF-OPEN (send one test request to check recovery). This prevents wasting resources on an endpoint that's been down for hours and protects your retry queue from growing unboundedly.

Related resources

- Webhooks vs APIs — understand the difference between push and pull models before building retry logic

- How to retry failed HTTP requests with exponential backoff — implementation deep-dive with code examples

- How to secure your webhook endpoints — signature verification, replay protection, and more

- How to test webhooks locally — 7 tools and methods for local development

- Exponential backoff — the algorithm explained

- Dead letter queue — what it is and when to use one

- Circuit breaker — the pattern for managing endpoint health

- Idempotency — why processing an event twice must be safe

- Retry policy — designing your retry strategy

- Retry delay calculator — visualize your backoff schedule