Quick Summary — TL;DR

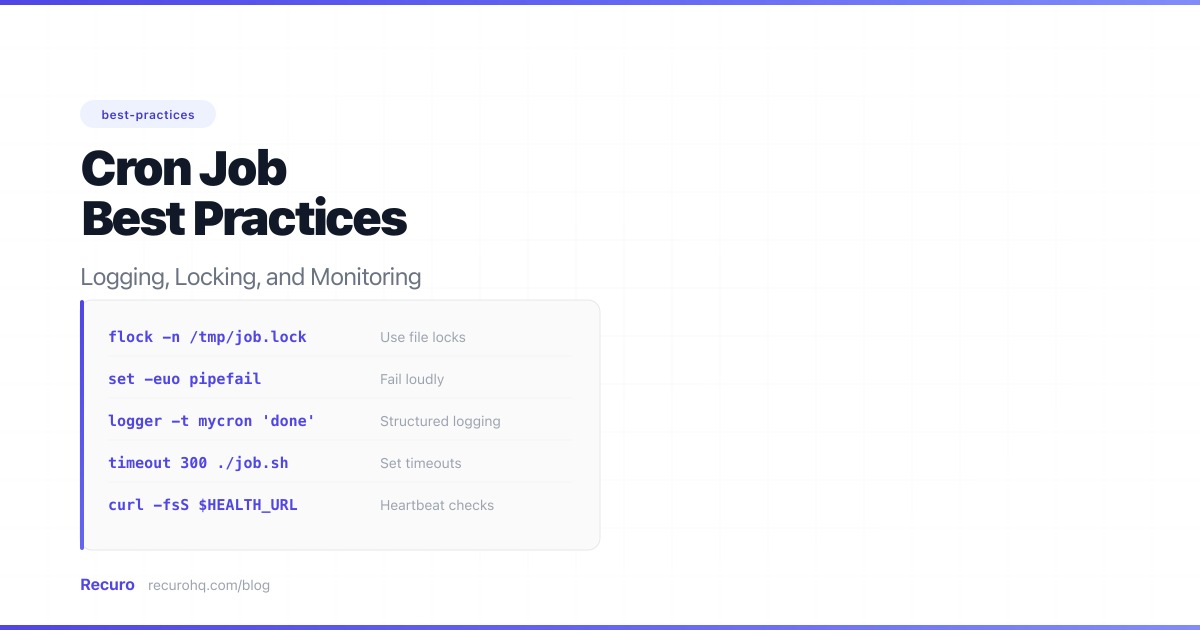

- Redirect both stdout and stderr to a log file with rotation — silent failures are the default, and they will cost you.

- Use flock to prevent overlapping runs. A long-running job that stacks on itself will consume resources and corrupt data.

- Wrap every job with timeout to kill hangs. A stuck job blocks future runs indefinitely and defeats your locking.

- Add heartbeat monitoring (dead man's switch) so you know when a job stops running entirely, not just when it fails.

- Make every job idempotent. If it can run twice safely, retries and overlaps become inconveniences instead of outages.

Most cron advice stops at the syntax: five fields, pick a schedule, deploy. That gets the job running. It does nothing to keep it running reliably. Production cron jobs need logging so failures leave a trace, locking so concurrent runs do not corrupt state, monitoring so missed executions trigger alerts, and error handling so transient failures do not require manual intervention.

This guide covers all of it with concrete code and real patterns you can apply today.

If you only implement two things, add logging and locking. Everything else in this guide is important, but these two prevent the most common production incidents. Logging means failures leave a trace instead of vanishing silently. Locking means a slow job does not stack on itself and corrupt your data. Start there, then layer on monitoring, timeouts, and the rest.

Want to see what happens when these practices are skipped? See 10 Cron Job Anti-Patterns.

Logging: make every execution visible

The cron daemon does not log your job’s output by default. Instead, it emails any output to the crontab owner (or to the address in MAILTO) through the local mail system, which is almost never configured on modern servers, so the output is effectively discarded. The result: your job fails, and nobody knows until a downstream system breaks.

Redirect stdout and stderr

The minimum viable logging setup is a redirect appended to your crontab entry:

```shell
0 * * * * /usr/bin/python3 /opt/app/sync.py >> /var/log/app/sync.log 2>&1
```

This appends both standard output and standard error to a single log file. Without 2>&1, error messages go to cron's mail handling and effectively vanish. With a single > instead of >>, each run overwrites the previous log.
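Redirection order matters, and getting it backwards is a common way for stderr to slip past the log. The sketch below demonstrates both orders; demo_job is a hypothetical stand-in that writes one line to each stream, and the log files are throwaway temp files.

```shell
#!/bin/bash
# demo_job is a hypothetical stand-in for a real job.
demo_job() {
  echo "stdout line"
  echo "stderr line" >&2
}

LOG_OK=$(mktemp)
LOG_BAD=$(mktemp)

# Correct order: stdout is pointed at the log first, then stderr is
# duplicated onto it, so both lines land in the file.
demo_job >> "$LOG_OK" 2>&1

# Wrong order: stderr is duplicated onto the *current* stdout (here, the
# command substitution) before stdout is redirected, so the error escapes.
ESCAPED=$(demo_job 2>&1 >> "$LOG_BAD")

cat "$LOG_OK"
echo "escaped the log: $ESCAPED"
```

Under cron, the "current stdout" in the wrong-order case is cron's own output capture, which ends up in the unconfigured mail spool.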

If you want stdout and stderr in separate files for easier triage:

```shell
0 * * * * /opt/app/sync.sh >> /var/log/app/sync.out 2>> /var/log/app/sync.err
```

Add timestamps

Plain output with no timestamps is hard to correlate. Wrap your cron command to prepend a timestamp to every line:

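```shell
0 * * * * /opt/app/sync.sh 2>&1 | while IFS= read -r line; do printf '[\%s] \%s\n' "$(date '+\%Y-\%m-\%d \%H:\%M:\%S')" "$line"; done >> /var/log/app/sync.log
```

Every % in this line is escaped as \%, because cron treats an unescaped % in the command field as a newline. For scripts you control, it is cleaner to add timestamps inside the script itself, where no escaping is needed. In bash: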

```shell
#!/bin/bash
log() { printf '[%s] %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*"; }

log "Starting database cleanup"
/usr/bin/psql -c "DELETE FROM sessions WHERE expires_at < NOW()" mydb
log "Cleanup complete, exit code: $?"
```

Minimum viable logging

If you do not control the script or do not want to modify it, you can still get one error file per day, named by date, with a single crontab redirect:

```shell
0 * * * * /opt/app/sync.sh >> /var/log/app/sync.log 2>> /var/log/app/sync-$(date +\%Y-\%m-\%d).err
```

This sends stdout to a persistent log and stderr to a date-stamped error file. When something breaks, you check today’s .err file. No script changes are required. For most teams, this plus log rotation is enough to diagnose the majority of cron failures.
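logrotate only manages the file names you list, so date-stamped .err files like these pile up at one per day. A minimal pruning sketch, assuming GNU find and GNU touch (for the demo) and a hypothetical 14-day retention; prune_err_files is an illustrative name, not an existing tool.

```shell
#!/bin/bash
# prune_err_files DIR PATTERN DAYS
# Deletes matching files last modified more than DAYS full days ago.
prune_err_files() {
  local dir=$1 pattern=$2 days=$3
  find "$dir" -maxdepth 1 -type f -name "$pattern" -mtime +"$days" -print -delete
}

# Demo in a throwaway directory: one stale file, one fresh file.
LOG_DIR=$(mktemp -d)
touch -d '20 days ago' "$LOG_DIR/sync-old.err"
touch "$LOG_DIR/sync-new.err"
prune_err_files "$LOG_DIR" 'sync-*.err' 14
```

In production you would call it from its own daily crontab entry, for example prune_err_files /var/log/app 'sync-*.err' 14.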

Structured logging (advanced)

For jobs that feed into a log aggregator (ELK, Loki, Datadog), emit JSON instead of plain text. This makes filtering by job name, status, or duration trivial:

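```shell
#!/bin/bash
START=$(date +%s)
OUTPUT=$(/opt/app/sync.sh 2>&1)
EXIT_CODE=$?
END=$(date +%s)
DURATION=$((END - START))

# Keep the JSON valid: truncate, drop backslashes, swap double quotes for
# single quotes, and turn newlines, tabs, and carriage returns into spaces.
printf '{"job":"sync","timestamp":"%s","duration_s":%d,"exit_code":%d,"output":"%s"}\n' \
  "$(date -u '+%Y-%m-%dT%H:%M:%SZ')" "$DURATION" "$EXIT_CODE" \
  "$(printf '%s' "$OUTPUT" | head -c 500 | tr -d '\\' | tr '"\n\t\r' "'   ")" \
  >> /var/log/app/cron.json
```

Log rotation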

Cron logs grow forever unless you rotate them. Use logrotate to keep them manageable:

```
/var/log/app/sync.log {
    daily
    rotate 14
    compress
    missingok
    notifempty
    create 0640 www-data www-data
}
```

Place this in /etc/logrotate.d/app-cron. This keeps 14 days of compressed history and prevents disk-full incidents caused by a noisy job.

Locking: prevent overlapping runs

A job scheduled to run every minute that takes three minutes to complete will end up with three instances running at once. Database jobs can deadlock. File-processing jobs can produce duplicate output. API-calling jobs can exceed rate limits. You need locking. If a cron job has ever produced duplicate or inconsistent results without an obvious cause, overlapping runs are a likely culprit.

flock: the standard tool

flock is part of the util-linux package and is available on virtually every Linux distribution. It creates an advisory lock on a file and ensures only one instance of a command runs at a time:

```shell
* * * * * /usr/bin/flock -n /tmp/sync.lock /opt/app/sync.sh
```

The -n flag means “non-blocking”: if the lock is already held, flock exits immediately with code 1 instead of waiting. The second invocation is silently skipped, which is exactly what you want.
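You can watch the skip behavior directly. This sketch holds a lock in the background and then tries to take it again. It also uses flock's -E (--conflict-exit-code) option, available in reasonably recent util-linux releases, which replaces the default exit code 1 so that a skipped run can be told apart from a failed one. The lock path is a throwaway temp file, and the sleeps are demo timings only.

```shell
#!/bin/bash
LOCK=$(mktemp)

# First "instance": hold the lock for three seconds in the background.
flock "$LOCK" sleep 3 &
HOLDER=$!
sleep 1   # give the background holder time to acquire the lock

# Second instance with -n: exits immediately with 1 instead of waiting.
flock -n "$LOCK" echo "this does not run"
SKIP_STATUS=$?

# Same, but report the conflict as 75 (EX_TEMPFAIL) instead of 1.
flock -n -E 75 "$LOCK" echo "this does not run either"
SKIP_STATUS_CUSTOM=$?

wait "$HOLDER"

# Once the holder has exited, the lock is free again.
flock -n "$LOCK" true
FREE_STATUS=$?

echo "skipped: $SKIP_STATUS, skipped with -E 75: $SKIP_STATUS_CUSTOM, after release: $FREE_STATUS"
```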

For more control, use flock inside your script:

```shell
#!/bin/bash
LOCKFILE="/var/lock/sync.lock"
exec 200>"$LOCKFILE"

if ! flock -n 200; then
  echo "Another instance is already running. Exiting."
  exit 0
fi

# Your actual job logic here
/usr/bin/python3 /opt/app/sync.py

# Lock is automatically released when the script exits
```

Using file descriptor 200 and exec keeps the lock for the entire duration of the script without needing a subshell.
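When only part of a script needs mutual exclusion, the flock(1) manual page also documents a subshell form, where the lock lasts exactly as long as the parenthesized block. A sketch wrapping that form in a helper; run_locked is a hypothetical name, and the demo simulates a second instance with a background flock.

```shell
#!/bin/bash
# run_locked LOCKFILE COMMAND [ARGS...]
# Runs COMMAND only if LOCKFILE can be locked without waiting.
run_locked() {
  local lockfile=$1
  shift
  (
    # fd 9 is opened on the lock file by the redirection after the subshell.
    flock -n 9 || { echo "lock busy, skipping: $*" >&2; exit 0; }
    "$@"
  ) 9>"$lockfile"
  # The lock is released when the subshell exits and fd 9 is closed.
}

LOCK=$(mktemp)
flock "$LOCK" sleep 3 &   # simulate another instance holding the lock
HOLDER=$!
sleep 1

BUSY_OUTPUT=$(run_locked "$LOCK" echo "ran" 2>/dev/null)
wait "$HOLDER"
FREE_OUTPUT=$(run_locked "$LOCK" echo "ran")
```

The trade-off versus the exec form is scope: the exec form protects the whole script, while the subshell form lets the rest of the script run unlocked.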

flock with a timeout

Sometimes you want the second instance to wait briefly rather than give up immediately. The -w flag sets a timeout in seconds:

```shell
* * * * * /usr/bin/flock -w 10 /tmp/sync.lock /opt/app/sync.sh
```

This waits up to 10 seconds for the lock. If the first instance finishes within that window, the second one proceeds. Otherwise it gives up and exits with code 1. This is useful for jobs that occasionally run a few seconds long but should not queue up indefinitely.

Advisory locks in databases

For jobs that write to a database, file locks are not always sufficient — especially if the job runs across multiple servers. Use the database’s own locking mechanism instead.

In PostgreSQL, advisory locks give you cross-process, cross-server mutual exclusion:

```sql
SELECT pg_try_advisory_lock(12345);
-- Returns true if acquired, false if already held
-- Run your logic
SELECT pg_advisory_unlock(12345);
```

In MySQL, the equivalent is GET_LOCK:

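```sql
SELECT GET_LOCK('sync_job', 0);
-- Returns 1 if acquired, 0 if already held
-- Run your logic
SELECT RELEASE_LOCK('sync_job');
```

The 0 timeout makes it non-blocking, matching flock’s -n behavior. In both databases these locks belong to the connection that took them and are released automatically when that session ends, so acquire the lock, run your logic, and release it over the same connection. Separate psql -c or mysql -e invocations each open a new session, so a lock taken in one of them is gone before the next one starts.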

Monitoring: know when jobs fail or stop running

Logging tells you what happened after the fact. Monitoring tells you in real time. There are two patterns that matter: execution monitoring (did the job succeed?) and heartbeat monitoring (did the job run at all?).

Execution monitoring with exit codes

Every Unix process returns an exit code. Zero means success. Anything else means failure. Your wrapper script should capture and act on this:

```shell
#!/bin/bash
/opt/app/sync.sh
EXIT_CODE=$?

if [ $EXIT_CODE -ne 0 ]; then
  curl -sf -X POST https://hooks.slack.com/services/YOUR/WEBHOOK/URL \
    -H 'Content-Type: application/json' \
    -d "{\"text\":\"Cron job 'sync' failed with exit code $EXIT_CODE\"}"
fi

exit $EXIT_CODE
```

This works for basic alerting, but it fires on every single failure. Transient errors, such as a brief network blip or a momentary 503, do not warrant a page. Alert on consecutive failures instead (2-3 in a row). See how to monitor cron jobs and get alerts for the full pattern.
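A minimal sketch of the consecutive-failure idea, assuming one writable state file per job. record_result, STATE_FILE, and the threshold of 3 are illustrative choices, and the alert and recovery messages are plain echo lines standing in for the curl webhook call above.

```shell
#!/bin/bash
# Count consecutive failures in a state file and alert only when the streak
# reaches THRESHOLD. A success resets the streak (and reports recovery).
THRESHOLD=3
STATE_FILE="${STATE_FILE:-$(mktemp)}"   # e.g. /var/lib/app/sync.failures in production

record_result() {
  local exit_code=$1 failures=0
  [ -s "$STATE_FILE" ] && failures=$(cat "$STATE_FILE")

  if [ "$exit_code" -eq 0 ]; then
    if [ "$failures" -ge "$THRESHOLD" ]; then
      echo "RECOVERED after $failures consecutive failures"   # replace with a real notification
    fi
    echo 0 > "$STATE_FILE"
    return 0
  fi

  failures=$((failures + 1))
  echo "$failures" > "$STATE_FILE"
  # Fire once, when the streak first reaches the threshold, not on every failure after it.
  if [ "$failures" -eq "$THRESHOLD" ]; then
    echo "ALERT: sync failed $failures times in a row (last exit code $exit_code)"   # replace with a real notification
  fi
}

# In the wrapper:
#   /opt/app/sync.sh
#   EXIT_CODE=$?
#   record_result "$EXIT_CODE"
#   exit "$EXIT_CODE"
```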

Heartbeat pings (dead man’s switch)

Exit-code monitoring misses the worst failure mode: the job that never runs at all. If the cron daemon crashes, the server reboots, or someone accidentally deletes a crontab entry, no execution happens — so there is nothing to log or alert on.

A heartbeat ping flips the model. Your job reports in after each successful run. If the monitoring system does not receive a ping within the expected window, it alerts:

```shell
0 * * * * /opt/app/sync.sh && curl -sf https://monitor.example.com/ping/sync-job-abc123 > /dev/null
```

The && ensures the ping only fires on success. If the script exits non-zero, the curl never runs, and the heartbeat monitor eventually fires an alert.
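On the monitoring side, a dead man's switch boils down to one question: how long since the last ping? A self-hosted sketch of that check, assuming the job touches a local heartbeat file on success instead of calling an HTTP endpoint, and that GNU stat is available. heartbeat_is_stale and the paths in the comments are hypothetical. Because this checker runs on the same host, it catches a deleted crontab entry or a job that stopped succeeding, but not a dead server; a hosted heartbeat service covers that case.

```shell
#!/bin/bash
# heartbeat_is_stale FILE MAX_AGE_SECONDS
# Returns 0 (stale) if FILE is missing or was last touched more than MAX_AGE seconds ago.
heartbeat_is_stale() {
  local file=$1 max_age=$2 now last
  [ -e "$file" ] || return 0      # never pinged: treat as stale
  now=$(date +%s)
  last=$(stat -c %Y "$file")      # GNU stat: modification time in epoch seconds
  [ $((now - last)) -gt "$max_age" ]
}

# Job side:     0 * * * * /opt/app/sync.sh && touch /var/lib/app/sync.heartbeat
# Checker side: run every few minutes and alert when
#               heartbeat_is_stale /var/lib/app/sync.heartbeat 4500 succeeds
```

The 4500-second window gives an hourly job 15 minutes of grace before it counts as missed.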

For critical jobs, combine both: alert on failures immediately, and alert on missed heartbeats separately. This catches both “it ran and broke” and “it stopped running entirely.”

Tracking execution time

A job that runs successfully but takes progressively longer is a job about to time out. Log the duration and watch for trends:

```shell
#!/bin/bash
# %N (nanoseconds) is a GNU date extension
START=$(date +%s%N)

/opt/app/sync.sh
EXIT_CODE=$?

END=$(date +%s%N)
DURATION_MS=$(( (END - START) / 1000000 ))

echo "sync completed in ${DURATION_MS}ms with exit code $EXIT_CODE"

if [ $DURATION_MS -gt 25000 ]; then
  curl -sf -X POST https://hooks.slack.com/services/YOUR/WEBHOOK/URL \
    -H 'Content-Type: application/json' \
    -d "{\"text\":\"Warning: sync job took ${DURATION_MS}ms (threshold: 25000ms)\"}"
fi

# Pass the job's own status through so exit-code monitoring still sees failures
exit $EXIT_CODE
```

Error handling: exit codes, retries, and dead letters

Use meaningful exit codes

Do not exit with generic code 1 for every failure. Use distinct codes so monitoring and wrappers can differentiate between transient and permanent errors:

```shell
#!/bin/bash
# No -f here: with --fail, curl treats HTTP errors as its own failure,
# while this script wants to inspect the status code itself.
RESPONSE=$(curl -s -w "%{http_code}" -o /tmp/sync_response.txt https://api.example.com/sync)

case $RESPONSE in
  200|201|204) exit 0 ;;   # Success
  429) exit 75 ;;          # Rate limited: retry later (EX_TEMPFAIL)
  500|502|503) exit 75 ;;  # Server error: retry later
  000) exit 75 ;;          # No HTTP response (DNS, connection, or timeout failure): retry later
  404) exit 69 ;;          # Endpoint gone: permanent failure (EX_UNAVAILABLE)
  *) exit 70 ;;            # Unknown error (EX_SOFTWARE)
esac
```

Using sysexits.h-style codes (64-78) makes your scripts play nicely with other Unix tools and makes log analysis meaningful.

Retry logic

For transient failures, add a simple retry loop with exponential backoff:

```shell
#!/bin/bash
MAX_RETRIES=3
RETRY_DELAY=5

# One initial attempt plus up to MAX_RETRIES retries, waiting 5s, 10s, then 20s
for attempt in $(seq 0 "$MAX_RETRIES"); do
  /opt/app/sync.sh && exit 0
  if [ "$attempt" -lt "$MAX_RETRIES" ]; then
    echo "Attempt $((attempt + 1)) failed, retrying in ${RETRY_DELAY}s..."
    sleep "$RETRY_DELAY"
    RETRY_DELAY=$((RETRY_DELAY * 2))
  fi
done

echo "All $((MAX_RETRIES + 1)) attempts failed"
exit 1
```

This is fine for self-contained scripts. For HTTP-based scheduled jobs, a purpose-built scheduler handles retries at the platform level, which keeps retry logic out of your application code entirely.
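Combining this loop with the exit-code convention from the previous section lets a wrapper retry only what is worth retrying. A sketch, assuming the job exits 75 (EX_TEMPFAIL) for transient errors and anything else non-zero for permanent ones; retry_transient and its argument order are hypothetical.

```shell
#!/bin/bash
# retry_transient MAX_RETRIES BASE_DELAY COMMAND [ARGS...]
# Retries COMMAND with exponential backoff, but only while it exits 75
# (EX_TEMPFAIL). Any other non-zero code is treated as permanent.
retry_transient() {
  local max_retries=$1 delay=$2 attempt=0 status
  shift 2
  while true; do
    "$@"
    status=$?
    [ "$status" -eq 0 ] && return 0
    if [ "$status" -ne 75 ]; then
      echo "Permanent failure (exit $status), not retrying" >&2
      return "$status"
    fi
    if [ "$attempt" -ge "$max_retries" ]; then
      echo "Still failing after $max_retries retries" >&2
      return "$status"
    fi
    attempt=$((attempt + 1))
    echo "Transient failure, retry $attempt/$max_retries in ${delay}s" >&2
    sleep "$delay"
    delay=$((delay * 2))
  done
}

# In a wrapper script (waits 5s, 10s, 20s between attempts):
#   retry_transient 3 5 /opt/app/sync.sh
```

Returning the job's own status, rather than a generic 1, keeps the transient/permanent distinction visible to whatever monitors the wrapper.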

Dead letter pattern

When a job fails permanently (all retries exhausted), do not just log and move on. Write the failed payload to a dead letter location — a file, a database table, or a queue — so it can be inspected and reprocessed later:

```shell
#!/bin/bash
if ! /opt/app/process-batch.sh; then
  mkdir -p /var/spool/app/dead-letter
  cp /var/spool/app/current-batch.json \
    "/var/spool/app/dead-letter/batch-$(date +%s).json"
  echo "Batch copied to dead letter queue for manual inspection"
fi
```

This prevents data loss when a downstream service is down for an extended period. You can reprocess the dead letter queue once the issue is resolved.
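Two details make a dead letter directory easier to operate: unique file names, so two failures in the same second do not overwrite each other, and a replay step for after the incident. A sketch with hypothetical helper names (dead_letter, replay_dead_letters), using mktemp to reserve each unique name; the replay command is whatever reprocesses a single batch file.

```shell
#!/bin/bash
# dead_letter FILE DIR: copy FILE into DIR under a unique, timestamped name.
dead_letter() {
  local file=$1 dir=$2 target
  mkdir -p "$dir" || return 1
  target=$(mktemp "$dir/batch-$(date +%Y%m%dT%H%M%S)-XXXXXX") || return 1
  cp "$file" "$target" && echo "Dead-lettered $file as $target"
}

# replay_dead_letters DIR COMMAND: run COMMAND on each file, delete it only on success.
replay_dead_letters() {
  local dir=$1 cmd=$2 f
  for f in "$dir"/batch-*; do
    [ -e "$f" ] || continue   # no files: the glob stayed literal
    if "$cmd" "$f"; then
      rm -f "$f"
    else
      echo "Replay failed for $f, keeping it" >&2
    fi
  done
}
```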

Timeouts: kill jobs that hang

A cron job with no timeout can run forever. A hung database connection, a slow API, or an infinite loop will hold the lock indefinitely and block every subsequent run. The timeout command is your safety net:

```shell
0 * * * * /usr/bin/timeout 300 /opt/app/sync.sh
```

This kills sync.sh after 300 seconds (5 minutes). If the job normally completes in 30 seconds, a 5-minute timeout gives plenty of headroom while still preventing indefinite hangs.
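GNU timeout reports a kill through its exit status: 124 when the time limit was hit (unless you pass --preserve-status), otherwise the command's own status. A wrapper can use that to tell a hang apart from an ordinary failure. A small sketch; run_with_timeout is a hypothetical helper, and the demo values in the usage note are only for illustration.

```shell
#!/bin/bash
# run_with_timeout SECONDS COMMAND [ARGS...]
# Distinguishes "killed for running too long" from other failures.
# Note: timeout runs an executable, so COMMAND cannot be a shell function.
run_with_timeout() {
  local limit=$1 status
  shift
  timeout "$limit" "$@"
  status=$?
  if [ "$status" -eq 124 ]; then
    echo "TIMEOUT: '$*' exceeded ${limit}s" >&2
  elif [ "$status" -ne 0 ]; then
    echo "FAILED: '$*' exited $status" >&2
  fi
  return "$status"
}

# Production form with a SIGKILL fallback 30 seconds after SIGTERM:
#   timeout -k 30 300 /opt/app/sync.sh
```

For example, run_with_timeout 1 sleep 5 logs a TIMEOUT line and returns 124, while run_with_timeout 5 false logs a FAILED line and returns 1.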

Combine timeout with flock to get both protections:

```shell
0 * * * * /usr/bin/timeout 300 /usr/bin/flock -n /tmp/sync.lock /opt/app/sync.sh >> /var/log/app/sync.log 2>&1
```

Place timeout on the outside so it covers the entire execution, including time spent waiting for the lock (if you use -w instead of -n).

Inside your scripts, set HTTP-level timeouts too:

```shell
curl --max-time 30 --connect-timeout 5 -sf https://api.example.com/sync
```

The outer timeout is your safety net for the whole process. The inner --max-time handles the common case of a single slow request. Both are necessary.

Signal handling and graceful shutdown

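When timeout stops a job, it sends SIGTERM by default. It only follows up with SIGKILL if you ask for it with -k (--kill-after): timeout -k 30 300 sends SIGKILL 30 seconds after the SIGTERM if the job is still running. If your script creates temporary files, holds database transactions, or writes partial output, you should trap SIGTERM and clean up: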

```shell
#!/bin/bash
TMPFILE=$(mktemp)

cleanup() {
  rm -f "$TMPFILE"
  echo "Caught signal, cleaned up temp file"
  exit 1
}
trap cleanup SIGTERM SIGINT

# Your job logic
/usr/bin/curl -sf -o "$TMPFILE" https://api.example.com/export
/usr/bin/psql -c "\COPY staging FROM '$TMPFILE' CSV" mydb

rm -f "$TMPFILE"
```

Without the trap, a script killed by SIGTERM or SIGINT leaves behind temp files and half-written data. With it, cleanup runs when the script is stopped by timeout or interrupted during a server shutdown, and the final rm handles normal completion. No trap can catch SIGKILL, so if you use timeout -k, leave enough time between the two signals for cleanup to finish.
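A common variant puts the cleanup in an EXIT trap and makes the signal traps simply exit, so cleanup runs exactly once on every path. One bash detail affects timing: bash defers a trap until the current foreground command finishes, but a wait on a background command is interrupted immediately. (Under timeout this rarely matters, because timeout signals the whole process group, so the foreground child is terminated as well.) The sketch writes a throwaway job script to a temp directory, sends it SIGTERM, and records whether its temp file was removed; sleep 30 stands in for real work.

```shell
#!/bin/bash
WORK_DIR=$(mktemp -d)

# A throwaway job script using the EXIT-trap pattern.
cat > "$WORK_DIR/job.sh" <<'EOF'
#!/bin/bash
TMPFILE=$(mktemp)
CHILD=
cleanup() {
  rm -f "$TMPFILE"
  [ -n "$CHILD" ] && kill "$CHILD" 2>/dev/null
}
trap cleanup EXIT        # runs on every exit, including the two below
trap 'exit 143' TERM     # 128 + 15, the conventional status for SIGTERM
trap 'exit 130' INT      # 128 + 2
echo "$TMPFILE" > "$1"   # report the temp file path to the caller
sleep 30 &               # stand-in for long-running work
CHILD=$!
wait "$CHILD"            # unlike a foreground command, wait is interrupted by the trap
EOF

bash "$WORK_DIR/job.sh" "$WORK_DIR/tmpname" &
JOB_PID=$!

# Wait (up to about 5s) for the job to record its temp file, then terminate it.
for i in $(seq 1 50); do
  [ -s "$WORK_DIR/tmpname" ] && break
  sleep 0.1
done
kill -TERM "$JOB_PID"
wait "$JOB_PID"
JOB_STATUS=$?
JOB_TMPFILE=$(cat "$WORK_DIR/tmpname")
echo "job exited $JOB_STATUS; temp file still present: $([ -e "$JOB_TMPFILE" ] && echo yes || echo no)"
```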

Idempotency: safe to run twice

An idempotent job produces the same result whether it runs once or five times. This matters because cron jobs will occasionally run twice — due to overlap, retries, clock adjustments during DST transitions, or manual re-execution during incident recovery.

Practical techniques for idempotency:

- Use upserts, not inserts. INSERT ... ON CONFLICT DO UPDATE in PostgreSQL, or INSERT ... ON DUPLICATE KEY UPDATE in MySQL, prevent duplicate rows.

- Process based on state, not time. Instead of “process all records created in the last hour,” use “process all records where status = 'pending'” and update the status atomically.

- Use idempotency keys. For API calls that create resources, include a unique key derived from the input data. If the same key is submitted twice, the API returns the existing result instead of creating a duplicate.

- Delete before insert. For report generation or cache rebuilds, clear the output before writing. This makes the job a full refresh rather than an incremental append that doubles data on re-run.

If your job sends emails, charges credit cards, or triggers irreversible side effects, idempotency is not optional. It is the difference between “the job ran twice and nothing bad happened” and “we billed 10,000 customers twice.”
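For work that is not a database write, the idempotency-key idea can be sketched with one marker file per key: record the key only after the work succeeds, and skip keys that are already recorded. This assumes runs are already serialized by flock (two concurrent runs could both see a missing marker); process_once and STATE_DIR are hypothetical names.

```shell
#!/bin/bash
STATE_DIR="${STATE_DIR:-$(mktemp -d)}"   # e.g. /var/lib/app/processed in production

# process_once KEY COMMAND [ARGS...]
# Runs COMMAND unless KEY has already been processed successfully.
process_once() {
  local key=$1 marker
  shift
  # Hash the key so any string (order IDs, file names) is a safe file name.
  marker="$STATE_DIR/$(printf '%s' "$key" | sha256sum | cut -d' ' -f1)"
  if [ -e "$marker" ]; then
    echo "skip: $key already processed"
    return 0
  fi
  "$@" || return $?   # no marker on failure, so the next run retries
  : > "$marker"       # record success only after the work completed
}

# Usage: process_once "invoice-$INVOICE_ID" /opt/app/send-invoice.sh "$INVOICE_ID"
```

This gives at-least-once behavior: a crash between the work and the marker write means one extra run, so the command should still tolerate repeats where it can.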

Environment isolation

Cron runs in a minimal shell environment. This causes the majority of “works on my machine, fails in cron” issues. For a full breakdown, see the cron job troubleshooting guide.

Set PATH explicitly

At the top of your crontab, define the PATH your jobs need:

```shell
PATH=/usr/local/bin:/usr/bin:/bin
SHELL=/bin/bash

0 * * * * /opt/app/sync.sh
```

Or use absolute paths for every command inside your scripts. Do not rely on shell aliases or profile-loaded paths.
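PATH problems under cron usually surface as "command not found" halfway through a run. A preflight check at the top of a script fails fast instead, with a clear log line that includes the PATH cron actually used. A sketch; require_commands is a hypothetical helper, and the command list is whatever your job needs.

```shell
#!/bin/bash
# require_commands CMD...: report every missing command; return 127
# (the shell's "command not found" status) if any are missing.
require_commands() {
  local cmd missing=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "Missing command: $cmd (PATH=$PATH)" >&2
      missing=1
    fi
  done
  [ "$missing" -eq 0 ] || return 127
}

# At the top of a job script:
#   require_commands curl psql python3 || exit $?
```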

Use env files for configuration

Do not hardcode credentials in crontab entries. Load them from a file:

```shell
#!/bin/bash
set -euo pipefail
source /etc/app/cron.env

/usr/bin/curl -sf -H "Authorization: Bearer $API_TOKEN" \
  https://api.example.com/sync
```

The cron.env file should be owned by the user the job runs as (root for root's crontab) with permissions 0600. Keep it out of version control.
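Because the env file holds secrets, the job can refuse to load it when its permissions are too loose, and fail with a clear message when a required variable is missing. A sketch assuming GNU stat; load_env is a hypothetical helper, and API_TOKEN is the variable from the example above.

```shell
#!/bin/bash
# load_env FILE VAR...: source FILE only if group and others have no access,
# then check that each named variable is set and non-empty.
load_env() {
  local file=$1 mode var
  shift
  mode=$(stat -c '%a' "$file") || return 1   # GNU stat: octal mode such as 600
  if [ $((8#$mode & 8#077)) -ne 0 ]; then
    echo "Refusing to load $file: mode $mode grants group or other access" >&2
    return 1
  fi
  # shellcheck source=/dev/null
  source "$file" || return 1
  for var in "$@"; do
    if [ -z "${!var:-}" ]; then
      echo "Required variable $var is missing from $file" >&2
      return 1
    fi
  done
}

# In the job script:
#   load_env /etc/app/cron.env API_TOKEN || exit 78   # EX_CONFIG
```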

Set a working directory

If your script uses relative paths for anything — config files, temp directories, data files — set the working directory explicitly:

```shell
0 * * * * cd /opt/app && ./sync.sh >> /var/log/app/sync.log 2>&1
```

Or inside the script:

```shell
#!/bin/bash
cd "$(dirname "$0")" || exit 1
```

Documentation: treat crontab like code

A crontab entry without context is a liability. Six months from now, nobody will remember why 0 3 * * 0 runs cleanup.sh or what happens if it is disabled.

Comment every entry

```shell
# Purge expired sessions from the database.
# Owner: backend team. Slack: #backend-oncall
# Expected duration: < 30s. Alert if > 60s.
# Safe to skip: yes (next run will catch up)
0 3 * * * /usr/bin/flock -n /tmp/cleanup.lock /opt/app/cleanup.sh >> /var/log/app/cleanup.log 2>&1
```

Use a cron expression reference

If your team is not fluent in cron expressions, add the human-readable schedule in the comment. Use the cron expression generator to build and validate expressions before deploying.

Version control your crontab

Do not edit crontabs directly in production. Store them in version control and deploy them as part of your release process:

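```shell
# In your deploy script:
crontab /opt/app/config/crontab.txt
```

This gives you change history, code review, and rollback capability. Treat crontab entries with the same rigor as application configuration. Note that crontab with a file argument replaces the invoking user's entire crontab, so the file in version control must contain every entry for that user, not just the new ones.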

Putting it all together

A production-ready cron entry combines timeout, locking, logging, and monitoring:

```shell
PATH=/usr/local/bin:/usr/bin:/bin
SHELL=/bin/bash

# Sync inventory from warehouse API.
# Owner: logistics team. Runs hourly. Timeout: 5 min.
# Idempotent: yes (upserts by SKU). Safe to retry.
0 * * * * /usr/bin/timeout 300 /usr/bin/flock -n /tmp/inventory-sync.lock /opt/app/inventory-sync.sh >> /var/log/app/inventory-sync.log 2>&1 && curl -sf https://monitor.example.com/ping/inventory-abc > /dev/null
```

And the script itself should handle signals gracefully:

```shell
#!/bin/bash
set -euo pipefail
source /etc/app/cron.env

TMPFILE=$(mktemp)
cleanup() { rm -f "$TMPFILE"; }
trap cleanup EXIT          # runs on normal exit and on the signal exits below
trap 'exit 143' SIGTERM    # exit (and therefore clean up) when timeout sends SIGTERM
trap 'exit 130' SIGINT

log() { printf '[%s] %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*"; }

log "Starting inventory sync"
/usr/bin/curl --max-time 30 --connect-timeout 5 -sf \
  -H "Authorization: Bearer $API_TOKEN" \
  -o "$TMPFILE" https://api.warehouse.example.com/inventory

/usr/bin/psql -c "\COPY inventory_staging FROM '$TMPFILE' CSV HEADER" "$DATABASE_URL"
/usr/bin/psql -c "CALL upsert_inventory_from_staging()" "$DATABASE_URL"

log "Sync complete"
```

This gives you: timeout (killed after 5 minutes if it hangs), locking (flock prevents overlap), signal handling (cleanup on SIGTERM/SIGINT), logging (stdout and stderr captured, with timestamped progress lines), heartbeat monitoring (ping on success), environment isolation (env file, absolute paths), and documentation (comments explain ownership and behavior).

That said, maintaining this for dozens of jobs across multiple servers gets tedious fast. Every job needs its own lock file, log rotation config, timeout tuning, heartbeat endpoint, and alerting rule. The operational surface area grows linearly with the number of jobs.

Let a scheduler handle the infrastructure

Recuro handles logging, locking, retries, and monitoring as built-in platform features. You define a cron expression and an HTTP endpoint. Every execution is logged with status, response time, and response body. Configurable alert thresholds notify you on consecutive failures, and recovery alerts tell you when a job is healthy again. No lock files to manage, no log rotation to configure, no wrapper scripts to maintain.

If you are managing more than a handful of cron jobs and spending time on the infrastructure around them rather than the jobs themselves, that is the problem Recuro solves.

Frequently asked questions

How do I prevent cron jobs from overlapping?

Use flock, which is part of util-linux and available on virtually every Linux system. Add it before your command in the crontab: /usr/bin/flock -n /tmp/myjob.lock /path/to/script.sh. The -n flag makes flock exit immediately if another instance holds the lock. For distributed systems where jobs run on multiple servers, use database advisory locks (pg_try_advisory_lock in PostgreSQL, GET_LOCK in MySQL) instead of file-based locks.

What is the best way to log cron job output?

At minimum, redirect both stdout and stderr to a file: >> /var/log/app/job.log 2>&1. For production systems, add timestamps to every log line (either in a wrapper script or inside the job itself), emit structured JSON if you use a log aggregator, and configure logrotate to prevent unbounded log growth. Never rely on cron's default mail behavior — it is almost never configured on modern servers.

How do I monitor cron jobs for failures?

Use two complementary approaches. First, check exit codes after each execution and alert on consecutive failures (2-3 in a row, not every single one, to avoid noise from transient errors). Second, use heartbeat monitoring (a dead man's switch) where the job pings a monitoring endpoint on success — if no ping arrives within the expected window, you get an alert. This catches both 'it ran and failed' and 'it stopped running entirely.'

What does it mean for a cron job to be idempotent?

An idempotent job produces the same result whether it runs once or multiple times. This matters because cron jobs can run twice due to overlapping executions, retries, or manual re-runs during incidents. Techniques include using database upserts instead of plain inserts, processing records by state rather than time window, including idempotency keys in API calls, and clearing output before writing during report generation.

How do I set a timeout on a cron job?

Use the timeout command, which is available on all modern Linux systems: timeout 300 /path/to/script.sh. This sends SIGTERM after 300 seconds. It does not escalate to SIGKILL unless you add -k, for example timeout -k 30 300 /path/to/script.sh, which sends SIGKILL 30 seconds later if the process is still running. Place timeout on the outside of your command chain (before flock) so it covers the entire execution. Inside your scripts, also set HTTP-level timeouts (curl --max-time 30) for individual requests. Without a timeout, a hung job blocks all future runs indefinitely.

Why does my cron job work manually but fail in cron?

Cron runs with a minimal environment: a stripped-down PATH (usually just /usr/bin:/bin), /bin/sh as the default shell, no loaded shell profile, and the user's home directory as the working directory instead of the directory your script lives in. Commands like python3, node, or php that live outside the default PATH will not be found. Fix this by using absolute paths for all commands, setting PATH and SHELL at the top of your crontab, sourcing environment variables from a file, and setting the working directory explicitly with cd.

How should I handle retries for failed cron jobs?

For self-contained scripts, add a retry loop with exponential backoff: retry 3 times with delays of 5, 10, and 20 seconds. Only retry transient errors (exit codes indicating server errors or rate limiting), not permanent failures like a 404. For HTTP-based scheduled jobs, a managed scheduler can handle retries at the platform level, keeping retry logic out of your application code. When all retries are exhausted, write the failed payload to a dead letter location for later inspection.