Quick Summary — TL;DR

- Cron jobs fail silently — but worse, they can stop running entirely with no one noticing. A dead man's switch (heartbeat monitor) catches this.

- Alert on consecutive failures (2-3 in a row), not every transient error. Track state across runs, not just individual exits.

- Several tools exist: Healthchecks.io (open source), Cronitor, Dead Man's Snitch, Better Stack, and Recuro (which combines scheduling + monitoring in one place).

- Track success rate, response time trends, and consecutive failures over time to catch degradation before it becomes an outage.

Cron jobs are fire-and-forget by design. When one fails, nothing happens — no error page, no stack trace, no angry user filing a ticket. The job just doesn’t run. You find out a week later when someone asks why the weekly report stopped, or when a customer complains their subscription renewal wasn’t processed, or when a database table has grown to 40GB because the nightly cleanup stopped running three weeks ago.

Why cron jobs fail silently

Traditional cron (the Unix scheduler) mails any output a job produces to the crontab owner. That mail usually lands in a local mail spool that nobody reads, and on many systems without a mail transport it is discarded outright. If you’re still managing jobs via crontab, understanding its logging and output behavior is the first step toward better monitoring. Cloud-based schedulers often swallow errors entirely. Your cron expression may be correct, but without monitoring, you’ll never know if the job actually succeeded. Common failure modes include:

- The endpoint is down. Your server returned a 500, but no one was watching.

- The job timed out. A slow query or network hiccup caused the request to hang.

- DNS or network issues. Transient infrastructure problems that resolve themselves — but your job already missed its window.

- Deployment broke the endpoint. A new release changed a route or removed a handler. This is the most common one: you refactor a controller or rename a route, and a job that worked fine for months now returns a 404 every hour. From the outside, everything looks fine.

In all of these cases, the scheduler did its part. The problem is that no one is watching the result. Unlike application errors, cron failures don’t surface anywhere — no logs, no user complaints, no Sentry events. They just disappear.
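When the job is an HTTP call made with curl, these failure modes leave distinct fingerprints in curl's exit code and the HTTP status it reports. The sketch below assumes curl is invoked with `-w '%{http_code}'` as in the examples in this article; `classify_result` and its category names are hypothetical. The exit codes are curl's documented ones (6: couldn't resolve host, 7: couldn't connect, 22: HTTP error returned with `-f`, 28: operation timed out).

```shell
# Hypothetical helper: map a curl exit code and HTTP status to a failure category.
# Usage: classify_result <curl_exit_code> <http_status>
classify_result() {
  local exit_code="$1" status="$2"
  case "$exit_code" in
    0|22) ;;                           # transfer finished (22 = -f rejected the status); inspect status below
    6) echo "dns"; return 0 ;;         # couldn't resolve host
    7) echo "connect"; return 0 ;;     # couldn't connect: endpoint down or port closed
    28) echo "timeout"; return 0 ;;    # --max-time exceeded
    *) echo "other"; return 0 ;;       # any other transport-level error
  esac
  case "$status" in
    2??) echo "ok" ;;
    404) echo "not_found" ;;           # typical symptom of a renamed or removed route
    *) echo "http_error" ;;
  esac
}

# Example (not executed here):
# status=$(curl -s -o /dev/null -w '%{http_code}' --max-time 30 "https://api.example.com/sync")
# classify_result "$?" "$status"
```

Separating `not_found` from other HTTP errors is a judgment call: a sudden switch from `ok` to `not_found` right after a release points at the deployment case rather than an overloaded server.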

But there’s an even worse failure mode: the job that never runs at all.

The dead man’s switch: catching jobs that stop entirely

The failure most people search for help with isn’t “my cron job returned an error.” It’s “my cron job stopped running and I didn’t know for two weeks.” Execution-based monitoring can’t catch this — if no execution happens, there’s nothing to log and nothing to alert on.

A dead man’s switch (also called heartbeat monitoring) flips the model: instead of alerting when a job fails, it alerts when a job doesn’t check in within an expected window.

How it works

- Your cron job sends a “ping” to a monitoring endpoint after each successful run

- The monitor expects a ping at least once per interval (e.g., every hour)

- If no ping arrives within the grace period, the monitor fires an alert

```shell
# Cron job with heartbeat ping
0 * * * * curl -sf https://api.example.com/sync && curl -sf https://monitor.example.com/ping/job-123
```

This catches failures that regular monitoring misses:

- The cron daemon stopped running

- The server rebooted and cron wasn’t re-enabled

- Someone commented out the cron entry

- A deployment replaced the crontab
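All four scenarios look the same from the monitor's side: the most recent ping just keeps getting older. The check in step 3 of "How it works" therefore comes down to a timestamp comparison. A minimal sketch, assuming the monitor stores the Unix time of the last ping; `heartbeat_status` and its parameters are hypothetical and don't correspond to any particular tool's API.

```shell
# Hypothetical monitor-side check: is a heartbeat overdue?
# Usage: heartbeat_status <last_ping_epoch> <interval_seconds> <grace_seconds> [now_epoch]
heartbeat_status() {
  local last_ping="$1" interval="$2" grace="$3"
  local now="${4:-$(date +%s)}"   # "now" can be injected for testing
  local deadline=$((last_ping + interval + grace))
  if [ "$now" -gt "$deadline" ]; then
    echo "late"   # no check-in within interval + grace: fire the alert
  else
    echo "up"
  fi
}
```

For the hourly job above with, say, a 5-minute grace period, `heartbeat_status "$last_ping" 3600 300` keeps returning `up` until 65 minutes pass without a ping.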

When to use it

Dead man’s switches are most valuable for critical jobs where silence is dangerous — billing runs, backup jobs, compliance reports. If the job not running is worse than the job failing, you need a heartbeat.

Monitoring tool landscape

You don’t have to build cron monitoring from scratch. Several tools exist, each with a different approach:

| Tool | Approach | Pricing |
|---|---|---|
| Healthchecks.io | Heartbeat/dead man’s switch. Your jobs ping an endpoint; alerts if a ping is missed. Open source, self-hostable. | Free tier (20 checks), paid from $20/mo |
| Cronitor | Heartbeat monitoring + cron expression validation. Dashboard for all your cron jobs. | Free tier (5 monitors), paid from $24/mo |
| Dead Man’s Snitch | Focused entirely on dead man’s switch monitoring. Simple, single-purpose. | Free (1 snitch), paid from $5/mo |
| Better Stack | Uptime + heartbeat monitoring (formerly Better Uptime). Part of a broader observability suite. | Free tier, paid from $24/mo |
| Recuro | Scheduling + monitoring in one tool. Runs your jobs and monitors them — execution logs, alert thresholds, and recovery notifications are built in. | Free tier, paid from $9/mo |

Most of these tools focus on the heartbeat side: your existing scheduler runs the job, and the tool watches for check-ins. Recuro takes a different approach — it’s the scheduler itself, so execution logging, failure detection, and alerting happen automatically without adding ping calls to your jobs.

If you already have a scheduler you’re happy with and just need monitoring, a dedicated heartbeat tool like Healthchecks.io or Cronitor is a good fit. If you’re setting up scheduled jobs from scratch (or tired of stitching together cron + monitoring), a combined tool removes the integration work.

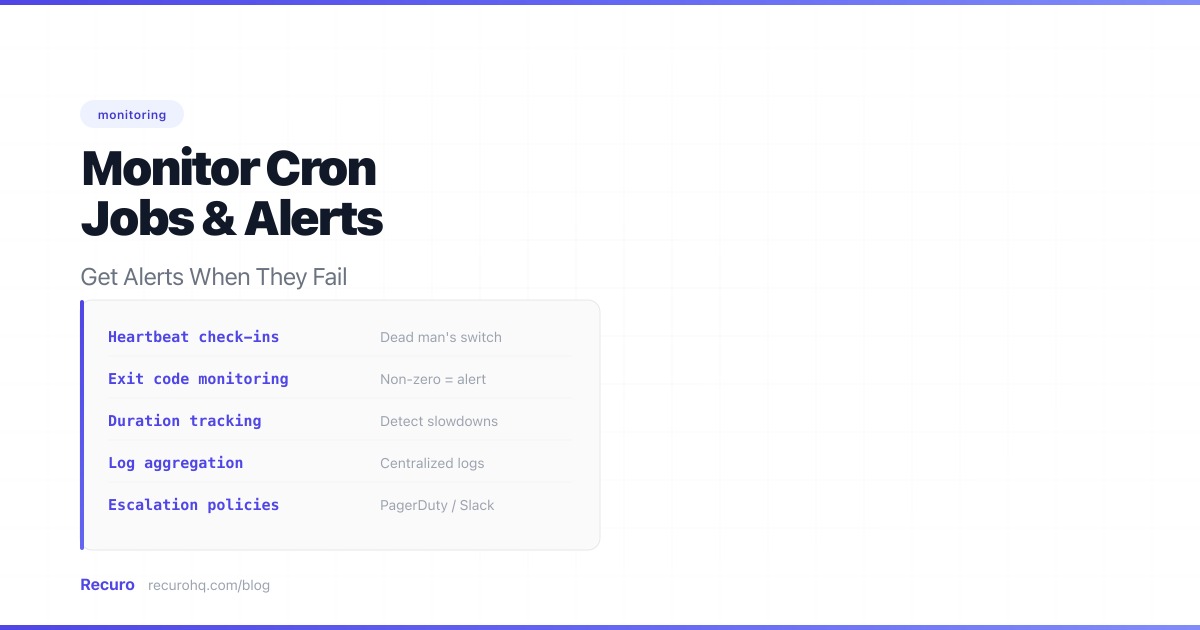

What good cron monitoring looks like

Effective monitoring for scheduled jobs has three layers:

1. Execution logging

Every run should produce a log entry with:

- Timestamp — when the job started and finished

- HTTP status code — did the endpoint respond, and with what?

- Response time — is the job getting slower over time?

- Response body (or a snippet) — useful for debugging failures

Without logs, you’re debugging blind. With them, you can answer “what happened at 3 AM?” in seconds.
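A sketch of one such log entry, written as a single tab-separated line per run so the history stays easy to grep. It assumes the job is an HTTP call made with curl; `format_log_entry`, the field order, the 200-character body limit and the log path in the usage comment are illustrative choices, not a standard format.

```shell
# Hypothetical helper: format one execution record as a tab-separated log line.
# Fields: start time (UTC), job name, HTTP status, duration in seconds, body snippet
format_log_entry() {
  local started_at="$1" job="$2" status="$3" duration="$4" body="$5" snippet
  # Flatten newlines/tabs so one run = one line, then keep roughly the first 200 characters
  snippet=$(printf '%s' "$body" | tr '\n\t' '  ' | cut -c1-200)
  printf '%s\t%s\t%s\t%s\t%s\n' "$started_at" "$job" "$status" "$duration" "$snippet"
}

# Example usage (not executed here):
# started=$(date -u +%Y-%m-%dT%H:%M:%SZ)
# body_file=$(mktemp)
# result=$(curl -s -o "$body_file" -w '%{http_code} %{time_total}' --max-time 30 "https://api.example.com/sync")
# format_log_entry "$started" "sync" "${result% *}" "${result#* }" "$(cat "$body_file")" >> /var/log/cron-jobs.log
```

curl's `%{time_total}` write-out variable supplies the response time, so the same line answers both "did it work?" and "is it getting slower?".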

2. Failure alerts

An alert should fire when:

- A job returns a non-2xx status code

- A job times out (no response within the configured window)

- A job fails N times in a row (consecutive failure threshold)

The alert threshold matters. A single transient failure isn’t worth waking someone up for, but three in a row probably is. A good default is 3 consecutive failures before alerting.
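The three conditions above reduce to two small checks: one deciding whether a single run failed, and one deciding whether the failure streak is long enough to alert on. A minimal sketch, assuming the job is an HTTP call whose curl exit code and status are available; `is_failure` and `should_alert` are hypothetical names, and the default threshold of 3 follows the paragraph above.

```shell
# Hypothetical helper: succeeds (returns 0) when a single run should count as a failure.
# Usage: is_failure <curl_exit_code> <http_status>
is_failure() {
  local curl_exit="$1" status="$2"
  # Nonzero curl exit covers timeouts (28), DNS/connect errors, and -f rejecting the status
  if [ "$curl_exit" -ne 0 ]; then
    return 0
  fi
  case "$status" in
    2??) return 1 ;;   # 2xx: the run succeeded
    *) return 0 ;;     # any other status counts as a failure
  esac
}

# Hypothetical helper: succeeds once the failure streak reaches the threshold (default 3).
# Usage: should_alert <consecutive_failures> [threshold]
should_alert() {
  local consecutive_failures="$1" threshold="${2:-3}"
  [ "$consecutive_failures" -ge "$threshold" ]
}
```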

3. Recovery notifications

Once a failing job starts succeeding again, you should get a recovery notification. Without it, your team keeps investigating an issue that’s already resolved.
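Adding recovery turns alerting into a small state machine per job: it has to remember not just the failure count but whether an alert already went out for the current incident. A sketch, with the state kept as two numbers and all names hypothetical:

```shell
# Hypothetical transition function for one job.
# State is "<consecutive_failures> <alerted_flag>"; outcome is "ok" or "fail".
# Prints the new state followed by the notification to send: none, alert, or recovery.
# Usage: next_state <failures> <alerted> <outcome> [threshold]
next_state() {
  local failures="$1" alerted="$2" outcome="$3" threshold="${4:-3}"
  if [ "$outcome" = "fail" ]; then
    failures=$((failures + 1))
    if [ "$failures" -ge "$threshold" ] && [ "$alerted" -eq 0 ]; then
      echo "$failures 1 alert"        # first time over the threshold: notify once
    else
      echo "$failures $alerted none"  # below threshold, or already alerted for this incident
    fi
  elif [ "$alerted" -eq 1 ]; then
    echo "0 0 recovery"               # an alert went out earlier, so announce the recovery
  else
    echo "0 0 none"                   # a blip that never alerted: reset quietly
  fi
}
```

The alert flag does double duty: it suppresses repeat alerts while the job keeps failing, and it ensures recovery messages are only sent for incidents someone was actually told about.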

DIY approach: tracking consecutive failures

If you’re running your own cron daemon, you can wrap each job with a monitoring script. The simplest version just checks an exit code and sends a Slack message, but that fires on every transient error. A more useful approach tracks consecutive failures using a state file:

```shell
#!/bin/bash
# monitor-job.sh: wraps a cron job with consecutive failure tracking
# Usage: ./monitor-job.sh <job-name> <url> [threshold]

JOB_NAME="$1"
URL="$2"
THRESHOLD="${3:-3}"
STATE_DIR="/var/lib/cron-monitor"
STATE_FILE="$STATE_DIR/$JOB_NAME.state"
SLACK_WEBHOOK="https://hooks.slack.com/services/YOUR/WEBHOOK/URL"

mkdir -p "$STATE_DIR"

# Read the failure count (line 1) and alert flag (line 2); default to 0
FAILURES=0
ALERTED=0
if [ -f "$STATE_FILE" ]; then
  FAILURES=$(sed -n '1p' "$STATE_FILE")
  ALERTED=$(sed -n '2p' "$STATE_FILE")
fi
# Guard against an empty or truncated state file
FAILURES="${FAILURES:-0}"
ALERTED="${ALERTED:-0}"

# Run the job with a 30-second timeout
HTTP_STATUS=$(curl -sf -o /dev/null -w "%{http_code}" --max-time 30 "$URL")
EXIT_CODE=$?

if [ "$EXIT_CODE" -ne 0 ] || [ "${HTTP_STATUS:0:1}" != "2" ]; then
  # Failure: increment the counter
  FAILURES=$((FAILURES + 1))

  if [ "$FAILURES" -ge "$THRESHOLD" ] && [ "$ALERTED" -eq 0 ]; then
    # Threshold reached: send a single alert for this incident
    curl -sf -X POST "$SLACK_WEBHOOK" \
      -H 'Content-Type: application/json' \
      -d "{\"text\":\"Cron job '$JOB_NAME' has failed $FAILURES times in a row (HTTP $HTTP_STATUS)\"}"
    # Mark as alerted so we don't spam
    ALERTED=1
  fi

  # Persist the new state
  printf '%s\n%s\n' "$FAILURES" "$ALERTED" > "$STATE_FILE"
else
  # Success: send a recovery notice only if this incident triggered an alert
  if [ "$ALERTED" -eq 1 ]; then
    curl -sf -X POST "$SLACK_WEBHOOK" \
      -H 'Content-Type: application/json' \
      -d "{\"text\":\"Cron job '$JOB_NAME' has recovered after $FAILURES consecutive failures\"}"
  fi
  # Reset state
  printf '0\n0\n' > "$STATE_FILE"
fi
```

Use it in your crontab:

```shell
# Instead of calling the endpoint directly:
0 * * * * /usr/local/bin/monitor-job.sh "db-cleanup" "https://api.example.com/cleanup" 3
*/5 * * * * /usr/local/bin/monitor-job.sh "sync-orders" "https://api.example.com/sync" 2
```

This gives you consecutive failure tracking, alert suppression (no repeated alerts for the same incident), and recovery notifications. But you’re still missing execution history, response time tracking, and a dashboard. For one or two jobs this is fine. For ten or more, you’ll want a tool that handles the plumbing.

Integrating with existing monitoring stacks

If you already run Prometheus, Datadog, or CloudWatch, you can push cron metrics into your existing observability pipeline instead of adopting a separate tool. A few common approaches:

- Prometheus Pushgateway: after each job run, push a metric like `cron_job_last_success_timestamp` or `cron_job_duration_seconds` to the Pushgateway. Then define a Prometheus alerting rule for staleness (e.g., alert if the timestamp is older than 2x the expected interval) and let Alertmanager route it.

- Datadog custom metrics: use the Datadog API or DogStatsD to submit `cron.job.success`/`cron.job.failure` counts and `cron.job.duration` gauges. Build monitors in Datadog the same way you would for any other service.

- CloudWatch custom metrics: if you’re on AWS, push metrics via `aws cloudwatch put-metric-data` and create CloudWatch Alarms for failure thresholds or missing data points.
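For the Pushgateway route, a sketch might look like this. It assumes a Pushgateway reachable at `http://pushgateway.example.com:9091` (a placeholder) and reuses the metric names from the list above; `build_cron_metrics` and `push_cron_metrics` are hypothetical helpers. The `/metrics/job/<job>` path and the plain-text exposition format are the Pushgateway's push interface.

```shell
# Hypothetical helper: build a Prometheus text-format payload for one run.
# Usage: build_cron_metrics <duration_seconds> [success_epoch]
build_cron_metrics() {
  local duration="$1" success_epoch="$2"
  printf '# TYPE cron_job_duration_seconds gauge\n'
  printf 'cron_job_duration_seconds %s\n' "$duration"
  # Only report a new success timestamp when the run succeeded. With POST, the
  # Pushgateway keeps previously pushed metrics that are absent from this push.
  if [ -n "$success_epoch" ]; then
    printf '# TYPE cron_job_last_success_timestamp gauge\n'
    printf 'cron_job_last_success_timestamp %s\n' "$success_epoch"
  fi
}

# Hypothetical helper: POST the payload under the grouping key job=<job-name>.
# Usage: push_cron_metrics <job-name> <duration_seconds> [success_epoch]
push_cron_metrics() {
  local job="$1" duration="$2" success_epoch="$3"
  local url="${PUSHGATEWAY_URL:-http://pushgateway.example.com:9091}"
  # --data-binary @- sends stdin as the request body (curl defaults to POST)
  build_cron_metrics "$duration" "$success_epoch" |
    curl -sf --max-time 10 --data-binary @- "$url/metrics/job/$job"
}
```

On a failed run, call it with an empty timestamp (for example `push_cron_metrics db-cleanup 2.1 ""`) so only the duration changes and the staleness alert keeps counting from the last real success. For an hourly job, the alerting rule could then use an expression along the lines of `time() - cron_job_last_success_timestamp > 7200`.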

The upside is a unified alerting pipeline — your cron alerts route through the same PagerDuty/Opsgenie/Slack channels as everything else. The downside is that you have to instrument each job yourself and you won’t get execution logs or response body capture without more work.

Metrics to track over time

Beyond per-execution pass/fail, track trends across runs to catch slow degradation before it becomes a failure:

| Metric | What it reveals | Warning sign |
|---|---|---|
| Success rate (7-day rolling) | Overall job health | Dropping below 95% |
| P95 response time | Performance degradation | Trending upward over weeks |
| Average response time | Baseline shifts | Sudden jumps (new deployment, bigger dataset) |
| Consecutive failures | Active incidents | Any value above 0 |
| Time since last success | Staleness | Exceeds 2x the expected interval |
| Response body size | Data issues | Sudden changes (empty responses, massive payloads) |

Most of these aren’t visible from a single execution log. You need historical data and a dashboard to spot the patterns.
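The first two rows of the table can be computed from plain run history without a dashboard. A minimal sketch, assuming each run was recorded as `<http_status> <duration_seconds>` on its own line and that the caller has already filtered the input to the window of interest (for example, the last 7 days); `summarize_runs` is a hypothetical helper, and P95 uses the nearest-rank definition.

```shell
# Hypothetical helper: summarize run history read from stdin.
# Input lines: "<http_status> <duration_seconds>"
# Output: "<success_rate_pct> <p95_seconds>" (or "n/a" when there is no data)
summarize_runs() {
  local input rate p95
  input=$(cat)
  # Success rate: share of runs with a 2xx status, to one decimal place
  rate=$(printf '%s\n' "$input" | awk '
    NF { total++; if ($1 ~ /^2[0-9][0-9]$/) ok++ }
    END { if (total) printf "%.1f", 100 * ok / total; else printf "n/a" }')
  # P95 (nearest rank): sort durations, take the value at rank ceil(0.95 * n)
  p95=$(printf '%s\n' "$input" | awk 'NF { print $2 }' | sort -n | awk '
    { v[NR] = $1 }
    END {
      if (NR == 0) { printf "n/a"; exit }
      r = int((95 * NR + 99) / 100)   # integer ceiling of 95 * NR / 100
      print v[r]
    }')
  echo "$rate $p95"
}
```

Running this daily and comparing the output over several weeks is enough to see the "trending upward" warning sign from the table.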

If a nightly report that used to take 2 seconds now takes 25, it’ll work fine today — but it’s one slow query away from timing out. You can see this in your execution logs if you look across runs instead of one at a time.

Best practices

- Set timeouts explicitly. Don’t let a hung request block your schedule. 30 seconds is a reasonable default for most HTTP endpoints.

- Use consecutive failure thresholds. Alert on 2-3 consecutive failures, not every single one. Transient errors are normal.

- Monitor response time trends. A job that’s getting slower is a job that’s about to time out.

- Keep endpoints idempotent. If a job gets retried, it shouldn’t produce duplicate side effects.

- Test your alerts. Intentionally break a job in staging to verify that alerts fire and route correctly.
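For jobs that run a script rather than call an HTTP endpoint, `curl --max-time` doesn't apply. GNU coreutils `timeout` fills the same role: it stops the command after the limit and exits with status 124, which a wrapper can report distinctly. A sketch; `run_with_timeout` and the crontab path in the comment are hypothetical, and `timeout` may not be available by default on non-GNU systems such as macOS.

```shell
# Hypothetical wrapper: run any command with a hard time limit.
# Usage: run_with_timeout <limit> <command> [args...]
# Returns the command's exit status, or 124 if the limit was hit.
run_with_timeout() {
  local limit="$1" status
  shift
  timeout "$limit" "$@"
  status=$?
  if [ "$status" -eq 124 ]; then
    echo "timed out after $limit: $*" >&2
  fi
  return "$status"
}

# Example crontab entry for a script-based job (path is hypothetical):
# 0 2 * * * timeout 30m /usr/local/bin/nightly-cleanup.sh
```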

Summary

Log every execution, alert on consecutive failures, notify on recovery. Use a dead man’s switch to catch the worst case — jobs that stop running entirely. Whether you pick a dedicated heartbeat monitor, plug into your existing observability stack, or use a scheduler with monitoring built in, the important thing is that someone is watching when your 3 AM billing job quietly stops working.

Frequently asked questions

What is the best way to monitor cron jobs?

Use a dead man's switch (heartbeat monitor) to detect jobs that stop running entirely, plus execution logging and consecutive failure alerts for jobs that run but fail. Tools like Healthchecks.io, Cronitor, and Recuro each handle this differently — heartbeat-only tools require your jobs to ping an endpoint, while combined schedulers like Recuro monitor automatically since they run the jobs themselves.

How do I get notified when a cron job fails?

Set up a consecutive failure threshold (2-3 failures in a row) rather than alerting on every single error. You can do this with a wrapper script that tracks state in a file, a heartbeat monitoring service, or a managed scheduler with built-in alerts. Route notifications to Slack, email, or your existing incident management tool.

What is a dead man's switch for cron jobs?

A dead man's switch (also called heartbeat monitoring) alerts you when a cron job fails to check in within an expected window. Unlike execution-based monitoring that watches for errors, a dead man's switch detects the absence of activity — catching cases where the cron daemon stopped, the server rebooted, or someone accidentally deleted the cron entry.

Should I build cron monitoring myself or use a tool?

For one or two jobs, a bash wrapper script with a state file works fine. For more than a handful, the overhead of maintaining state files, execution history, dashboards, and alert routing adds up. A dedicated tool (Healthchecks.io, Cronitor, Better Stack) or a combined scheduler like Recuro handles all of this out of the box.

How do I monitor cron jobs with Prometheus or Datadog?

Push custom metrics after each job run — for Prometheus, use the Pushgateway to record last success timestamp and duration; for Datadog, submit custom metrics via DogStatsD or the API. Then create alerts for staleness or failure thresholds. This keeps cron monitoring in your existing observability pipeline but requires per-job instrumentation.