Quick Summary — TL;DR

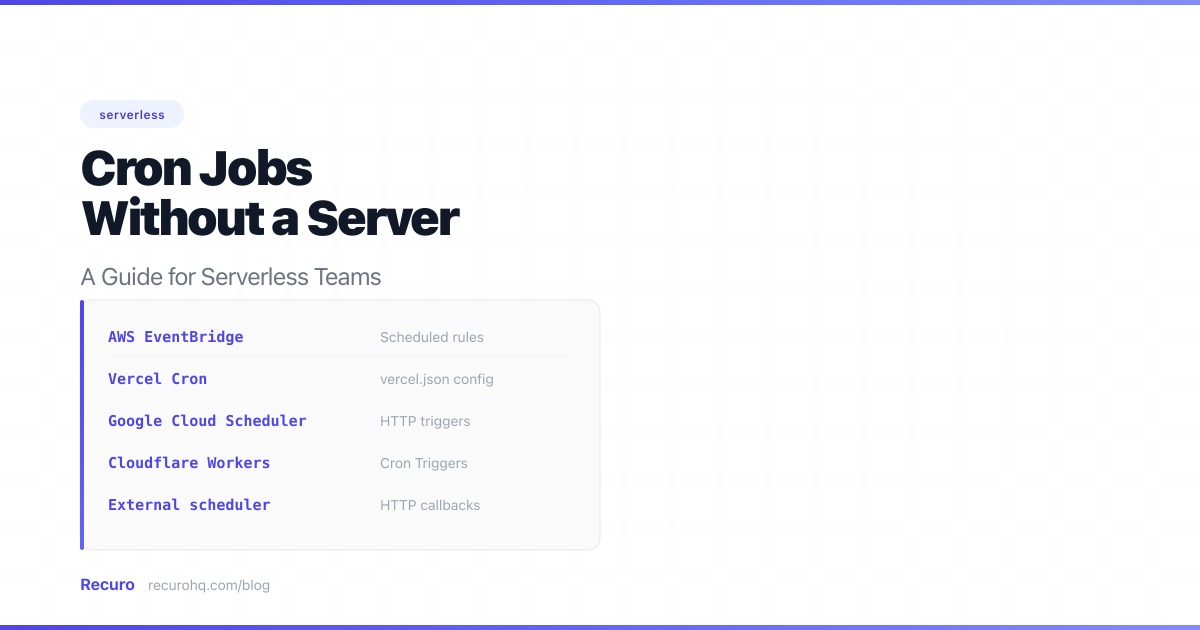

- Serverless platforms lack a persistent cron daemon, so you need an external trigger for scheduled tasks.

- For Vercel/Netlify apps, platform cron configs are the fastest path — but limited in retries, observability, and minimum frequency.

- AWS EventBridge + Lambda gives you fine-grained control if you're already on AWS, but ties your scheduling to one provider.

- An external HTTP scheduler is the most portable option: built-in retries, failure alerts, and a single dashboard across any infrastructure.

Serverless is great — until you need something to happen on a schedule. There’s no crontab on Lambda (see crontab explained for what you’d be replacing), no persistent process on Vercel, and no background worker on Cloudflare Workers. If your app needs to sync data from a third-party API every hour or generate invoices every night, you need a different approach.

This guide walks through the concrete options with real implementation examples, so you can pick the one that fits your stack and have it running today.

The scenario

Let’s ground this in a real problem. You have a Next.js app deployed on Vercel. It pulls product data from a third-party API (say, a supplier’s inventory feed) and stores it in your database. You need that data refreshed every hour so your storefront stays current.

On a traditional server, you’d add a line to your crontab and move on. On Vercel, you can’t. Here’s how to solve it.

Option 1: Vercel cron (platform-native scheduling)

If your app is on Vercel, this is the fastest path. You define cron schedules in vercel.json, and Vercel hits your API routes on that schedule.

Step 1: Create the API route

Create an API route that performs the actual work. This is the endpoint Vercel’s scheduler will call.

```typescript
// app/api/sync-products/route.ts (Next.js App Router)
import { NextResponse } from 'next/server';
import { db } from '@/lib/db';

export const maxDuration = 60; // seconds; raise if your sync takes longer

export async function GET(request: Request) {
  // Verify the request is from Vercel Cron (not a random visitor)
  const authHeader = request.headers.get('authorization');
  if (authHeader !== `Bearer ${process.env.CRON_SECRET}`) {
    return NextResponse.json({ error: 'Unauthorized' }, { status: 401 });
  }

  try {
    // Fetch products from supplier API
    const response = await fetch('https://supplier-api.example.com/v2/products', {
      headers: { 'X-API-Key': process.env.SUPPLIER_API_KEY! },
    });

    if (!response.ok) {
      throw new Error(`Supplier API returned ${response.status}`);
    }

    const products = await response.json();

    // Upsert products into your database
    let updated = 0;
    for (const product of products.data) {
      await db.product.upsert({
        where: { supplierId: product.id },
        update: {
          name: product.name,
          price: product.price,
          stock: product.stock_quantity,
          updatedAt: new Date(),
        },
        create: {
          supplierId: product.id,
          name: product.name,
          price: product.price,
          stock: product.stock_quantity,
        },
      });
      updated++;
    }

    return NextResponse.json({
      synced: updated,
      timestamp: new Date().toISOString(),
    });
  } catch (error) {
    console.error('Product sync failed:', error);
    return NextResponse.json(
      { error: 'Sync failed', detail: String(error) },
      { status: 500 }
    );
  }
}
```

Step 2: Configure the cron schedule

Add the schedule to vercel.json in your project root:

```json
{
  "crons": [
    {
      "path": "/api/sync-products",
      "schedule": "0 * * * *"
    }
  ]
}
```

Step 3: Set the CRON_SECRET environment variable

In your Vercel dashboard, add a CRON_SECRET environment variable. Vercel sends this as an Authorization: Bearer <secret> header so your route can reject unauthorized calls.
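One gap worth noting in the inline header check: if `CRON_SECRET` is unset, the template literal evaluates to `Bearer undefined`, which a caller could send on purpose. The following is a minimal sketch of a stricter check, assuming a Node.js runtime. The helper name `isAuthorized` is hypothetical and not part of any Vercel API.

```typescript
import { createHash, timingSafeEqual } from 'node:crypto';

// Hypothetical helper: returns true only when a secret is configured
// and the Authorization header matches it exactly.
function isAuthorized(authHeader: string | null, secret: string | undefined): boolean {
  // Reject outright when either side is missing, so an unset secret never matches.
  if (!secret || !authHeader) return false;

  // Hash both values to fixed-length digests: timingSafeEqual requires
  // equal-length inputs, and comparing digests avoids leaking the length.
  const received = createHash('sha256').update(authHeader).digest();
  const expected = createHash('sha256').update(`Bearer ${secret}`).digest();
  return timingSafeEqual(received, expected);
}
```

In the route, this could stand in for the inline comparison: call `isAuthorized(request.headers.get('authorization'), process.env.CRON_SECRET)` and return the 401 response when it is false.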

Trade-offs

Pros:

- Dead simple to set up — two files and you’re done

- Lives alongside your code

- No external dependencies

Cons:

- Frequency limits — Hobby plan: once per day. Pro plan ($20/mo): once per minute

- No built-in retries — if the request fails, it’s gone. No backoff, no second attempt

- No failure alerts — you won’t know it failed unless you check Vercel logs manually

- Single project only — can’t trigger endpoints in other services or external APIs

- 10-second timeout on Hobby, 60 seconds on Pro — long-running syncs may time out

For a single Next.js project with simple scheduling needs on a Pro plan, Vercel cron works well. Once you need retries, alerts, or schedules across multiple services, you’ll outgrow it.
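Because the platform will not retry a failed run, a partial mitigation is to retry the flaky step inside the handler itself. The sketch below shows one way to do that with exponential backoff. The names `backoffDelay` and `withRetry` and the default delays are illustrative assumptions, not anything Vercel provides.

```typescript
// Delay before retry number `attempt` (0-based): base * 2^attempt, capped.
function backoffDelay(attempt: number, baseMs = 500, capMs = 8000): number {
  return Math.min(capMs, baseMs * 2 ** attempt);
}

// Runs `task` up to `maxAttempts` times, sleeping between failures.
// Rethrows the last error if every attempt fails.
async function withRetry<T>(
  task: () => Promise<T>,
  maxAttempts = 3,
  baseMs = 500,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await task();
    } catch (error) {
      lastError = error;
      // Only sleep if another attempt will follow.
      if (attempt < maxAttempts - 1) {
        const delay = backoffDelay(attempt, baseMs);
        await new Promise<void>((resolve) => setTimeout(resolve, delay));
      }
    }
  }
  throw lastError;
}
```

Wrapping the supplier fetch in `withRetry` (and throwing when `response.ok` is false) would cover brief upstream outages. The total wait has to fit inside `maxDuration`, and this does nothing for an invocation that never starts or times out, so it complements rather than replaces scheduler-level retries.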

Option 2: AWS EventBridge + Lambda

If you’re on AWS, EventBridge Scheduler (part of EventBridge, the successor to CloudWatch Events) gives you full control over scheduled Lambda invocations. This is the right choice when you’re already invested in the AWS ecosystem and want infrastructure-as-code.

Full SAM implementation

Here’s a complete example: a Lambda function that generates and emails daily invoice summaries, triggered by EventBridge at 6 AM UTC every day.

```yaml
# template.yaml (AWS SAM)
AWSTemplateFormatVersion: '2010-09-09'
Transform: AWS::Serverless-2016-10-31
Description: Daily invoice summary generator

Globals:
  Function:
    Runtime: nodejs20.x
    Timeout: 120
    MemorySize: 256
    Environment:
      Variables:
        DB_CONNECTION_STRING: !Sub '{{resolve:secretsmanager:prod/db:SecretString:connectionString}}'

Resources:
  InvoiceSummaryFunction:
    Type: AWS::Serverless::Function
    Properties:
      Handler: src/handlers/invoice-summary.handler
      Description: Generates and sends daily invoice summaries
      Policies:
        - SESCrudPolicy:
            IdentityName: yourapp.com
      Events:
        DailySchedule:
          Type: ScheduleV2
          Properties:
            ScheduleExpression: "cron(0 6 * * ? *)"
            Description: "Run invoice summary daily at 6 AM UTC"
            RetryPolicy:
              MaximumRetryAttempts: 2
              MaximumEventAgeInSeconds: 3600

  InvoiceFailureAlarm:
    Type: AWS::CloudWatch::Alarm
    Properties:
      AlarmName: InvoiceSummaryFailures
      MetricName: Errors
      Namespace: AWS/Lambda
      Dimensions:
        - Name: FunctionName
          Value: !Ref InvoiceSummaryFunction
      Statistic: Sum
      Period: 86400
      EvaluationPeriods: 1
      Threshold: 1
      ComparisonOperator: GreaterThanOrEqualToThreshold
      AlarmActions:
        - !Sub "arn:aws:sns:${AWS::Region}:${AWS::AccountId}:ops-alerts"
```

And the Lambda handler:

```typescript
import { SESv2Client, SendEmailCommand } from '@aws-sdk/client-sesv2';
import { Pool } from 'pg';

const ses = new SESv2Client({});
const pool = new Pool({ connectionString: process.env.DB_CONNECTION_STRING });

interface InvoiceSummary {
  totalInvoices: number;
  totalAmount: number;
  overdueCount: number;
  overdueAmount: number;
}

export async function handler(): Promise<{ statusCode: number; body: string }> {
  const client = await pool.connect();

  try {
    // Query invoice activity from the last 24 hours
    const result = await client.query<InvoiceSummary>(`
      SELECT
        COUNT(*) AS "totalInvoices",
        COALESCE(SUM(amount), 0) AS "totalAmount",
        COUNT(*) FILTER (WHERE due_date < NOW() AND status = 'unpaid') AS "overdueCount",
        COALESCE(SUM(amount) FILTER (WHERE due_date < NOW() AND status = 'unpaid'), 0) AS "overdueAmount"
      FROM invoices
      WHERE created_at >= NOW() - INTERVAL '24 hours'
    `);

    const summary = result.rows[0];

    // Send summary email via SES
    await ses.send(new SendEmailCommand({
      FromEmailAddress: process.env.SES_FROM_EMAIL,
      Content: {
        Simple: {
          Subject: { Data: `Invoice Summary - ${new Date().toISOString().split('T')[0]}` },
          Body: {
            Text: {
              Data: [
                `Daily Invoice Summary`,
                `---------------------`,
                `New invoices: ${summary.totalInvoices}`,
                `Total amount: $${(summary.totalAmount / 100).toFixed(2)}`,
                `Overdue: ${summary.overdueCount} ($${(summary.overdueAmount / 100).toFixed(2)})`,
              ].join('\n'),
            },
          },
        },
      },
    }));

    return {
      statusCode: 200,
      body: JSON.stringify({ summary, emailSent: true }),
    };
  } finally {
    client.release();
  }
}
```

Deploy with sam build && sam deploy --guided.

Trade-offs

Pros:

- Native integration with the AWS ecosystem

- EventBridge supports retry policies (up to 185 retries) and dead-letter queues

- Free tier covers most use cases (EventBridge Scheduler: first 14M invocations/month free; Lambda: 1M requests/month free)

- Full IaC support — schedule lives in version control

Cons:

- Vendor lock-in — your scheduling logic is tied to AWS

- Observability requires separate setup (CloudWatch Alarms, as shown above)

- AWS cron syntax differs from standard Unix cron (six fields, mandatory ? wildcard); see our AWS Lambda cron guide for the full syntax breakdown

- Managing dozens of scheduled triggers across multiple services gets messy fast

- Deploying a SAM/CDK stack is more ceremony than most teams want for a simple hourly sync
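
To make the syntax difference concrete, here is a rough sketch that converts a five-field Unix cron expression into the six-field AWS form. It relies on two AWS rules: one of day-of-month or day-of-week must be `?`, and day-of-week is numbered 1-7 starting at Sunday (Unix cron uses 0-6). The function name `toAwsCron` is hypothetical, and the sketch deliberately rejects anything beyond simple numeric day-of-week values.

```typescript
// Converts a five-field Unix cron expression to the six-field AWS form.
// Sketch only: day-of-week must be "*" or digits 0-6 joined by "," or "-".
function toAwsCron(unixCron: string): string {
  const fields = unixCron.trim().split(/\s+/);
  if (fields.length !== 5) {
    throw new Error(`Expected 5 fields, got ${fields.length}`);
  }
  const [minute, hour, dayOfMonth, month, dayOfWeek] =
    fields as [string, string, string, string, string];

  let awsDayOfMonth = dayOfMonth;
  let awsDayOfWeek = dayOfWeek;

  if (dayOfWeek === '*') {
    // No weekday restriction: AWS wants "?" in the day-of-week slot.
    awsDayOfWeek = '?';
  } else if (dayOfMonth === '*') {
    if (!/^[0-6]([,-][0-6])*$/.test(dayOfWeek)) {
      throw new Error(`Unsupported day-of-week field: ${dayOfWeek}`);
    }
    // Weekday restriction: "?" goes in day-of-month, and each
    // weekday number shifts by one (Unix 0 = Sunday, AWS 1 = Sunday).
    awsDayOfMonth = '?';
    awsDayOfWeek = dayOfWeek.replace(/[0-6]/g, (n) => String(Number(n) + 1));
  } else {
    throw new Error('AWS cron cannot restrict both day-of-month and day-of-week');
  }

  // The trailing "*" is the year field, which Unix cron does not have.
  return `cron(${minute} ${hour} ${awsDayOfMonth} ${month} ${awsDayOfWeek} *)`;
}
```

For example, `toAwsCron('0 6 * * *')` yields `cron(0 6 * * ? *)`, the expression used in the SAM template, and `toAwsCron('0 9 * * 1-5')` yields `cron(0 9 ? * 2-6 *)`.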

Option 3: GitHub Actions as a scheduler

A creative workaround — use GitHub Actions’ schedule trigger to call your endpoint:

```yaml
name: Warm product cache
on:
  schedule:
    - cron: '0 */4 * * *' # Every 4 hours
jobs:
  warm-cache:
    runs-on: ubuntu-latest
    steps:
      - name: Trigger cache warm
        run: |
          response=$(curl -s -o /dev/null -w "%{http_code}" \
            -X POST https://yourapp.com/api/warm-cache \
            -H "Authorization: Bearer ${{ secrets.CRON_TOKEN }}" \
            -H "Content-Type: application/json")

          if [ "$response" != "200" ]; then
            echo "Cache warm failed with status $response"
            exit 1
          fi
          echo "Cache warm succeeded"
```

Trade-offs

Pros:

- Free for public repos (2,000 minutes/month free for private repos)

- No infrastructure to manage

- Good enough for prototyping

Cons:

- Timing is unreliable — GitHub explicitly notes that scheduled workflows may be delayed or skipped during high load. Delays of 5-30 minutes are common; longer gaps happen during peak CI/CD hours

- A skipped run produces no signal at all: you only find out by checking the Actions tab

- Competes with your actual CI/CD pipeline for runner capacity and minutes

- No automatic retries, and alerting is limited to GitHub’s own workflow-failure notifications

Fine for a side project or non-critical tasks like cache warming. Don’t rely on it for anything that needs to run on time, every time.
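
Since a skipped run is silent, one way to notice it is to record when the job last succeeded and check that timestamp from somewhere else (a health endpoint, for instance). The sketch below shows only the staleness calculation. `RunRecord` and `isOverdue` are hypothetical names, and where the timestamp is stored is left open.

```typescript
interface RunRecord {
  lastSuccessAt: Date; // when the job last completed successfully
  intervalMs: number;  // expected gap between runs
  graceMs: number;     // tolerated lateness before flagging
}

// True when the next run should already have succeeded, allowing for grace.
function isOverdue(record: RunRecord, now: Date = new Date()): boolean {
  const deadline =
    record.lastSuccessAt.getTime() + record.intervalMs + record.graceMs;
  return now.getTime() > deadline;
}
```

For the four-hour cache warm above, a grace period of 30 minutes or more would absorb the delays that are common on GitHub's scheduler while still catching a run that never happened.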

Option 4: External HTTP scheduler

Instead of making your serverless platform handle scheduling, offload it to a dedicated service. An external scheduler hits your HTTP endpoint — essentially a webhook call (see webhooks vs APIs for how this differs from polling) — on a cron schedule. It doesn’t care if your backend is serverless, a VPS, or a Kubernetes cluster.

How it works

- You give the scheduler a cron expression and an endpoint URL

- The scheduler sends an HTTP request to your endpoint at each interval

- Your serverless function spins up, handles the request, and shuts down

- The scheduler logs the response, retries on failure, and alerts you if something breaks

This is the approach that scales best when you have scheduled tasks spread across multiple services. Instead of managing Vercel cron configs in one project, EventBridge rules in another, and a GitHub Actions workflow for a third, you have one dashboard with every schedule, its history, and its status.
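
To illustrate the logging-and-alerting step, the sketch below shows how a scheduler might decide when to raise an alert from its run history. This is an assumption about how such a service could work, not a description of any particular product. `RunResult`, `consecutiveFailures`, and `shouldAlert` are hypothetical names.

```typescript
interface RunResult {
  status: number; // HTTP status returned by the endpoint
  at: string;     // ISO timestamp of the run
}

// Counts failed runs at the tail of the history (most recent last).
function consecutiveFailures(history: RunResult[]): number {
  let count = 0;
  for (const run of [...history].reverse()) {
    const ok = run.status >= 200 && run.status < 300;
    if (ok) break;
    count++;
  }
  return count;
}

// Fires exactly once, on the run that reaches the threshold,
// rather than on every failure after it.
function shouldAlert(history: RunResult[], threshold = 3): boolean {
  return consecutiveFailures(history) === threshold;
}
```

Alerting on a streak rather than on a single failure keeps one transient 502 from paging anyone, while still surfacing an endpoint that is genuinely down.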

Example: the same product sync, triggered externally

Your API route stays almost identical to the Vercel cron example above. The only difference is how you authenticate the incoming request:

```typescript
import { NextResponse } from 'next/server';
import { db } from '@/lib/db';

export const maxDuration = 60;

export async function POST(request: Request) {
  // Verify the request is from your scheduler
  const authHeader = request.headers.get('authorization');
  if (authHeader !== `Bearer ${process.env.SCHEDULER_TOKEN}`) {
    return NextResponse.json({ error: 'Unauthorized' }, { status: 401 });
  }

  const response = await fetch('https://supplier-api.example.com/v2/products', {
    headers: { 'X-API-Key': process.env.SUPPLIER_API_KEY! },
  });

  if (!response.ok) {
    // Return 5xx so the scheduler knows to retry
    return NextResponse.json(
      { error: `Supplier API returned ${response.status}` },
      { status: 502 }
    );
  }

  const products = await response.json();
  let updated = 0;

  for (const product of products.data) {
    await db.product.upsert({
      where: { supplierId: product.id },
      update: {
        name: product.name,
        price: product.price,
        stock: product.stock_quantity,
        updatedAt: new Date(),
      },
      create: {
        supplierId: product.id,
        name: product.name,
        price: product.price,
        stock: product.stock_quantity,
      },
    });
    updated++;
  }

  // Return 200 with details; the scheduler logs this response
  return NextResponse.json({
    synced: updated,
    timestamp: new Date().toISOString(),
  });
}
```

The key difference: when the supplier API is down, you return a 502. The external scheduler sees the non-2xx response, waits, and retries with backoff. With Vercel cron, that failure is just gone.

Trade-offs

Pros:

- Platform-agnostic — works with any backend that has an HTTP endpoint

- Built-in retries with exponential backoff

- Failure alerts when consecutive runs fail

- Centralized dashboard for all your scheduled jobs across every service

- No vendor lock-in to a specific cloud provider

Cons:

- External dependency

- Your endpoint needs to be publicly accessible (or accessible via a fixed IP/VPN)

Cost comparison

Here’s what each approach actually costs for a typical workload: 100 scheduled tasks running hourly (roughly 73,000 executions/month).

| Approach | Monthly cost | What’s included |
|---|---|---|
| AWS EventBridge + Lambda | ~$0.10 | Both services have generous free tiers that cover this volume |
| Google Cloud Scheduler + Cloud Run | ~$9.70 | 3 free scheduler jobs; $0.10/job/month after (97 paid jobs). Cloud Run per-request pricing on top |
| Vercel Cron (Pro plan) | $0 incremental | Requires Pro plan at $20/mo/member. Cron included, no extra charge |
| GitHub Actions (private repo) | ~$570 | Each run uses ~1 min of compute (about 73,000 min/month). 2,000 free minutes, then $0.008/min |
| Recuro (external scheduler) | $8/mo (Starter) | Includes 50 schedules, retries, alerts, and execution logs |
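
The workload figure comes from simple arithmetic, sketched below so you can plug in your own numbers. The free-tier allowances are the ones quoted in the EventBridge section (1M Lambda requests and 14M scheduler invocations per month). The function names are illustrative.

```typescript
// Average runs per month for `tasks` jobs that each fire every `intervalMinutes`.
function monthlyExecutions(tasks: number, intervalMinutes: number): number {
  const minutesPerMonth = (365 * 24 * 60) / 12; // 43,800 on average
  return Math.round(tasks * (minutesPerMonth / intervalMinutes));
}

// Units that are actually billed once a free allowance is used up.
function billableUnits(used: number, freeAllowance: number): number {
  return Math.max(0, used - freeAllowance);
}

// 100 hourly tasks, checked against the AWS free-tier allowances.
const executions = monthlyExecutions(100, 60);
const lambdaBillable = billableUnits(executions, 1000000);
const schedulerBillable = billableUnits(executions, 14000000);
```

Here `executions` comes out to 73,000, and both billable counts are zero, which is why the AWS row rounds to a few cents.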

The cheapest option isn’t always the best. The hidden cost is the hours spent debugging a silent failure at 2 AM — the data sync that stopped running, the invoices that didn’t generate, the cache that went stale. A scheduler with built-in alerts pays for itself the first time you catch a failure before a customer notices.

Which approach should you pick?

The common case: Next.js / Nuxt on Vercel or Netlify

Most people reading this have a frontend framework deployed on Vercel or Netlify and need to add scheduled tasks. Here’s the decision path:

Start with Vercel/Netlify cron if:

- You have 1-3 simple schedules (data sync, cache warm, cleanup)

- You’re on a Pro plan already

- You don’t need retries or failure alerts

- All your scheduled endpoints live in this one project

Move to an external scheduler when:

- You need retries — your upstream API is flaky and you can’t afford missed runs

- You need alerts — you want to know immediately when a sync fails, not discover it hours later

- You have schedules across multiple services (a Vercel app, an AWS Lambda, a standalone API)

- You need runs more frequent than your plan allows

The full decision matrix

| Scenario | Recommendation |
|---|---|
| 1-3 simple schedules on Vercel/Netlify (Pro plan) | Platform cron config: it’s already there |
| AWS-native app with IaC pipeline | EventBridge + Lambda with CloudWatch Alarms |
| Multiple services across different platforms | External HTTP scheduler |
| Need retries, alerts, and execution logs | External HTTP scheduler |
| Quick prototype or side project | GitHub Actions (but switch before production) |
| High-frequency schedules (every minute) on free tier | External HTTP scheduler (platform cron free tiers are too restrictive) |

Recuro: scheduling for serverless teams

Recuro is an external HTTP scheduler built for exactly this use case. Point it at any endpoint — Vercel API route, Lambda function URL, Cloud Run service, or any public URL — and it handles the scheduling, retries, and monitoring.

- Any cron expression — from every minute to once a year

- Automatic retries with exponential backoff when your endpoint returns a non-2xx response

- Failure alerts when consecutive runs fail, so you know before your users do

- Execution logs for every request — status code, response time, and response body

- No infrastructure — it’s a managed service, so there’s nothing to deploy or maintain

If you’ve outgrown vercel.json cron configs or you’re tired of managing EventBridge rules across multiple AWS accounts, give it a try.

Frequently asked questions

Can I run cron jobs on Vercel's free tier?

Yes, but with significant limitations. Vercel's Hobby plan allows cron jobs with a minimum interval of once per day. For more frequent schedules (down to once per minute), you need the Pro plan at $20/month per team member.

How do I handle cron job failures in a serverless environment?

Platform cron features (Vercel, Netlify) don't retry failed runs. AWS EventBridge supports retry policies with up to 185 attempts and dead-letter queues. External HTTP schedulers like Recuro provide automatic retries with exponential backoff and failure alerts out of the box.

What's the most portable way to schedule tasks without a server?

An external HTTP scheduler. It calls your endpoint on a schedule regardless of where it's hosted — Vercel, AWS, Google Cloud, or a simple VPS. This means you can migrate your infrastructure without changing your scheduling setup.

Is GitHub Actions reliable enough for production cron jobs?

No. GitHub Actions scheduled workflows can be delayed by 5-30 minutes or skipped entirely during high load. GitHub themselves document this limitation. Use it for prototyping or non-critical tasks, but switch to a dedicated scheduler before going to production.

Can serverless cron jobs run longer than the function timeout?

Not directly. If your task exceeds the platform's timeout (e.g., 10 seconds on Vercel Hobby, 15 minutes on AWS Lambda), it will be killed. For long-running tasks, break the work into smaller chunks, use a queue to process items in batches, or move the heavy processing to a service with longer execution limits like AWS Fargate or Cloud Run.
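
As a rough sketch of the chunking approach, the function below processes items until a time budget runs out and returns a cursor to resume from on the next invocation. The name `processWithinBudget` and the cursor-based design are assumptions for illustration. Persisting the cursor between runs (in your database or a queue message) is left out.

```typescript
interface BatchResult {
  processed: number;         // items handled in this invocation
  nextCursor: number | null; // index to resume from, or null when done
}

// Handles items starting at `startCursor` until the list ends or the
// time budget is spent. `now` is injectable so the clock can be faked.
async function processWithinBudget<T>(
  items: T[],
  startCursor: number,
  budgetMs: number,
  handle: (item: T) => Promise<void>,
  now: () => number = Date.now,
): Promise<BatchResult> {
  const deadline = now() + budgetMs;
  let processed = 0;

  for (const item of items.slice(startCursor)) {
    // Stop before starting an item once the budget is exhausted.
    if (now() >= deadline) break;
    await handle(item);
    processed++;
  }

  const cursor = startCursor + processed;
  return {
    processed,
    nextCursor: cursor < items.length ? cursor : null,
  };
}
```

Set the budget comfortably below the platform timeout so the item in flight has time to finish, and make each item's work idempotent so a repeated item after a crash does no harm.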